Speechify is announcing the early release of SIMBA 3.0, the latest generation of its voice AI models. SIMBA 3.0 is now available to select third-party developers through the Speechify Voice API, with general availability planned for March 2026. Built by the Speechify AI Research Lab, SIMBA 3.0 delivers high-quality text to speech, speech recognition (speech-to-text), and speech-to-speech capabilities that developers can build directly into their own products and platforms.

"SIMBA 3.0 was built for real production voice workloads, with a focus on long-form stability, low latency, and reliable performance at scale. We want developers to have voice models that are easy to integrate and powerful enough for everyday use," said Raheel Kazi, Head of Engineering at Speechify.

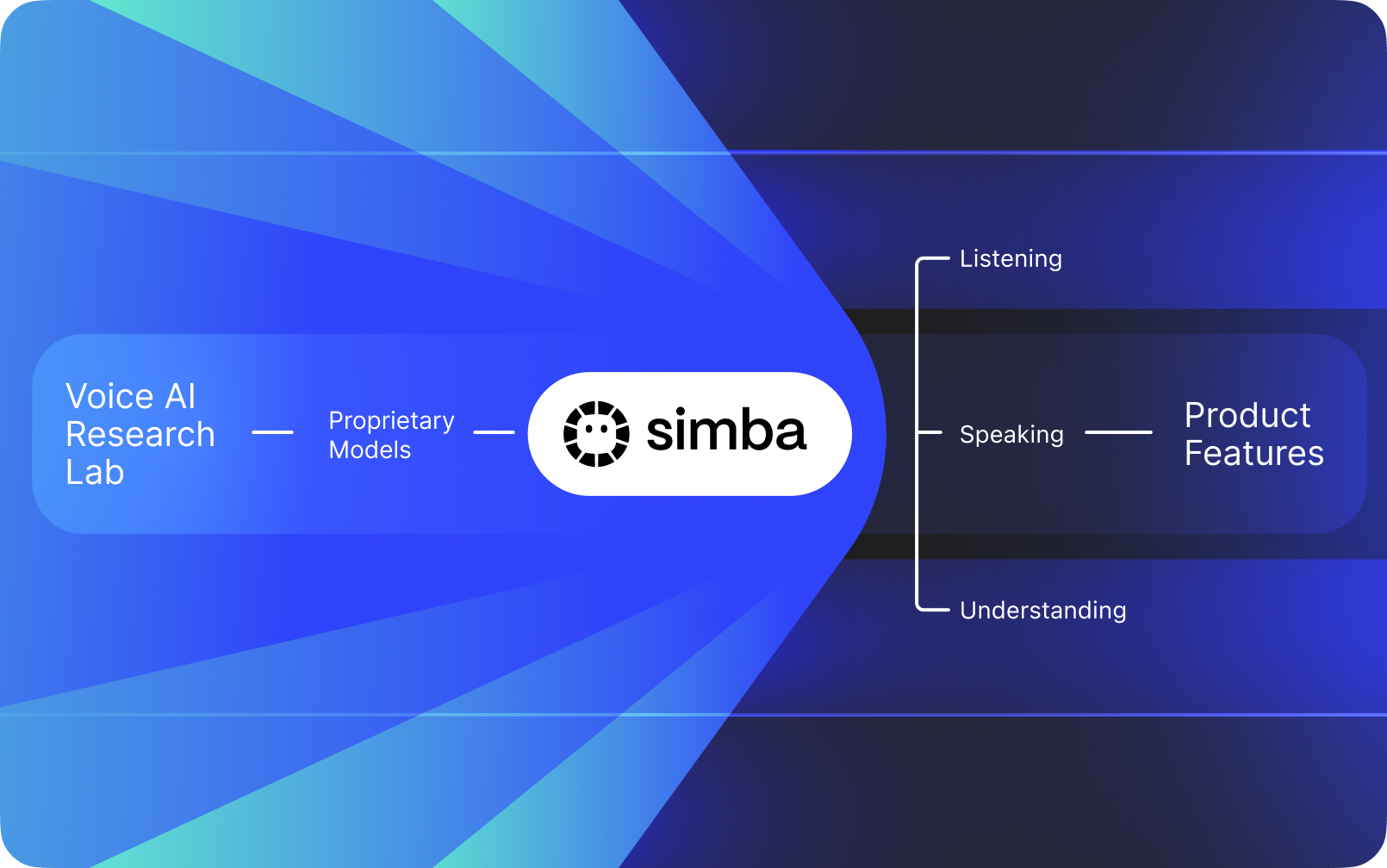

Speechify is not a wrapper built on top of other AI companies. It runs its own AI research lab, where it develops proprietary voice models. It sells these models to third-party developers through the Speechify API for integration into any application, from AI receptionists and chatbots to content platforms and accessibility tools.

Speechify uses the same models in its own products while giving developers access to them through the Speechify Voice API. This matters because the quality, latency, cost, and development direction of the models are not tied to other providers; they are set by Speechify's own research team.

Speechify's models are purpose-built for production workloads and deliver top-tier quality at scale. Third-party developers access SIMBA 3.0 and other models directly through the Speechify Voice API, with production REST endpoints, complete documentation, quickstart guides, and official Python and TypeScript SDKs. The Speechify platform is designed for fast integration, production deployment, and scalable voice infrastructure, so teams can ship voice features to their users quickly.

This article explains what SIMBA 3.0 is, what the Speechify AI Research Lab is building, and why Speechify delivers top-quality voice AI, low latency, and low cost for developer workloads, positioning it ahead of other voice and multimodal AI providers such as OpenAI, Gemini, Anthropic, ElevenLabs, Cartesia, and Deepgram.

What Does It Mean for Speechify to Be an AI Research Lab?

An AI lab is a specialized research and development organization where experts in machine learning, data, and computational modeling develop, train, and deploy advanced intelligent systems. When people say "AI lab", they usually mean an organization that does two things at once:

1. Develops and trains its own models

2. Makes those models available to developers through production APIs and SDKs

Some organizations excel at building models but do not make them available to outside developers. Others offer APIs but rely on third-party models. Speechify runs a proprietary, vertically integrated voice AI stack. It builds its own voice models and offers them to third-party developers through APIs, while also using them in its own consumer apps, which lets it validate model performance in real-world use on an ongoing basis.

The Speechify AI Research Lab is an in-house research center focused on voice intelligence. Its mission is to advance text to speech, automatic speech recognition, and speech-to-speech systems so that developers can build voice-first applications for a wide range of use cases, from AI assistants to accessibility and narration tools.

A serious voice AI lab typically has to solve problems such as:

- Naturalness and quality of text to speech for production use

- Speech recognition (ASR) accuracy across accents and in noisy conditions

- Truly low latency for natural conversation in AI agents

- Stability across long-form audio

- Document understanding for processing PDFs, web pages, and structured content

- OCR and page parsing for scanned documents and images

- User feedback loops for model improvement

- Developer infrastructure that exposes voice capabilities through APIs and SDKs

Speechify's lab brings all of these together in a single architecture and makes them available to developers through the Speechify Voice API, which works across platforms and applications.

What Is SIMBA 3.0?

SIMBA is Speechify's proprietary family of voice AI models. It powers both Speechify's own products and third-party developer solutions built on the Speechify API. SIMBA 3.0 is the latest generation, optimized for voice-first performance, speed, and real-time interaction, and developers can integrate it easily into their own products.

SIMBA 3.0 is built for exceptional voice quality, fast response times, and stability over long listening sessions in production. It enables professional voice applications across industries.

For third-party developers, SIMBA 3.0 enables use cases such as:

- AI voice agents and conversational systems

- Automated customer support and AI receptionists

- Calling systems for sales and support

- Voice assistants and speech-to-speech applications

- Content narration and audiobook production platforms

- Accessibility and assistive tools

- Education platforms with voice-based learning

- Healthcare applications with empathetic voice interaction

- Multilingual translation and communication apps

- Voice-enabled IoT and automotive systems

When users say a voice "sounds human", several technical elements are working together:

- Prosody (rhythm, pitch, stress)

- Pacing that follows meaning

- Natural pauses

- Stable pronunciation

- Intonation aligned with syntax

- Emotional neutrality when appropriate

- Expressiveness when appropriate

SIMBA 3.0 is the model developers integrate when they want voice experiences to sound natural even at high speaking rates, across long sessions, and with varied content. For production workloads, from AI telephony to content platforms, SIMBA 3.0 is optimized to outperform general-purpose voice layers.

How Does Speechify Use SSML for Fine-Grained Speech Control?

Speechify supports SSML so developers can fine-tune synthesized speech. SSML lets you adjust pitch, speaking rate, pauses, emphasis, and style using the <speak> root element together with elements such as <prosody>, <break>, <emphasis>, and <sub> (substitution). This lets teams shape delivery precisely so the voice output fits the context, format, and purpose of each production use case.
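As a hedged illustration, the sketch below assembles an SSML document from the elements named above using only the Python standard library. Attribute names and values such as `rate="slow"`, `time="500ms"`, and `level="strong"` follow the W3C SSML 1.1 specification; which values the Speechify Voice API accepts is an assumption to check against its SSML documentation, and the helper function names are hypothetical.

```python
from typing import Optional
from xml.sax.saxutils import escape, quoteattr


def prosody(text: str, rate: Optional[str] = None, pitch: Optional[str] = None) -> str:
    """Wrap text in <prosody>, adding only the attributes that were given."""
    attrs = ""
    if rate is not None:
        attrs += f" rate={quoteattr(rate)}"
    if pitch is not None:
        attrs += f" pitch={quoteattr(pitch)}"
    return f"<prosody{attrs}>{escape(text)}</prosody>"


def pause(duration: str) -> str:
    """A <break> of the given length, e.g. "500ms" or "1s"."""
    return f"<break time={quoteattr(duration)}/>"


def emphasis(text: str, level: str = "moderate") -> str:
    """Stress a word or phrase (SSML levels: strong, moderate, reduced, none)."""
    return f"<emphasis level={quoteattr(level)}>{escape(text)}</emphasis>"


def sub(written: str, spoken: str) -> str:
    """Speak `spoken` in place of `written`, e.g. expanding an abbreviation."""
    return f"<sub alias={quoteattr(spoken)}>{escape(written)}</sub>"


def speak(*fragments: str) -> str:
    """Wrap already-built fragments in the <speak> root; plain text must be escaped first."""
    return "<speak>" + "".join(fragments) + "</speak>"


ssml = speak(
    prosody("Welcome back.", rate="slow", pitch="high"),
    pause("500ms"),
    escape("Your order ships "),
    emphasis("today", level="strong"),
    escape(". Tracking details are in the "),
    sub("SMS", "text message"),
    escape(" we just sent."),
)
print(ssml)
```

Keeping each element in its own helper makes the markup easy to unit-test and keeps escaping of user-supplied text in one place.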

How Does Speechify Enable Real-Time Audio Streaming?

Speechify offers streaming text to speech, delivering audio incrementally in chunks as soon as each part is generated, so playback can begin immediately without waiting for the full recording. This is essential for long-form and low-latency use cases such as voice agents, accessibility tools, and automated podcast and audiobook production. Developers can stream large texts and receive audio as MP3, OGG, AAC, or raw PCM for fast integration into real-time systems.
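The benefit of streaming is easiest to see by measuring time to first audio. The sketch below is transport-agnostic: `consume_stream` accepts any iterable of audio byte chunks (for example, the body of a chunked HTTP response), hands each chunk to a playback callback as soon as it arrives, and records how long the first chunk took. The `fake_tts_stream` generator is a hypothetical stand-in for a real Speechify stream, which is not called here; its chunk size and delay are made up for illustration.

```python
import time
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, List, Optional


@dataclass
class StreamStats:
    time_to_first_chunk_s: Optional[float]  # None if the stream produced no audio
    total_bytes: int
    chunks: int


def consume_stream(chunks: Iterable[bytes], play: Callable[[bytes], None]) -> StreamStats:
    """Pass each audio chunk to `play` immediately instead of buffering the whole file."""
    start = time.perf_counter()
    first: Optional[float] = None
    total = count = 0
    for chunk in chunks:
        if not chunk:
            continue  # ignore empty keep-alive chunks
        if first is None:
            first = time.perf_counter() - start
        play(chunk)  # playback can start while later chunks are still being generated
        total += len(chunk)
        count += 1
    return StreamStats(first, total, count)


def fake_tts_stream(n_chunks: int = 5, delay_s: float = 0.01) -> Iterator[bytes]:
    """Hypothetical stand-in for a streaming TTS response."""
    for _ in range(n_chunks):
        time.sleep(delay_s)  # simulated generation time per chunk
        yield b"\x00" * 3200  # 100 ms of 16 kHz, 16-bit mono PCM silence


received: List[bytes] = []
stats = consume_stream(fake_tts_stream(), received.append)
print(f"first audio after {stats.time_to_first_chunk_s:.3f}s, "
      f"{stats.total_bytes} bytes in {stats.chunks} chunks")
```

With a buffered (non-streaming) call, the first audible sample would arrive only after the last chunk; here it arrives after the first one.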

How Do Speech Marks Synchronize Text and Audio in Speechify?

Speech marks map spoken audio back to the source text using word-level timing data. Each response includes time-aligned text segments that show when each word starts and ends in the audio stream. This enables real-time text highlighting, precise seeking by word or phrase, analytics, and tight synchronization between on-screen text and playback. Developers can use speech marks for reading aids, educational tools, and interactive listening experiences.
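A common use of word timings is highlighting the word currently being spoken. The sketch below assumes the speech marks have already been parsed into `(start_ms, end_ms, word)` entries; the exact field names in Speechify's response are not assumed here, and `WordMark` and `SpeechMarkIndex` are hypothetical names. Start times are precomputed once, so each playback-position lookup is a binary search, which matters when highlighting updates many times per second.

```python
from bisect import bisect_right
from typing import NamedTuple, Optional, Sequence


class WordMark(NamedTuple):
    start_ms: int  # when the word starts in the audio
    end_ms: int    # when it ends
    word: str


class SpeechMarkIndex:
    """Precomputes start times so each playback-position lookup is a binary search."""

    def __init__(self, marks: Sequence[WordMark]) -> None:
        self.marks = sorted(marks, key=lambda m: m.start_ms)
        self.starts = [m.start_ms for m in self.marks]

    def word_at(self, position_ms: int) -> Optional[WordMark]:
        """Word being spoken at `position_ms`, or None during a pause."""
        i = bisect_right(self.starts, position_ms) - 1  # last word starting at or before position
        if i >= 0 and position_ms < self.marks[i].end_ms:
            return self.marks[i]
        return None

    def seek_ms(self, word: str) -> Optional[int]:
        """Playback offset of the first occurrence of `word` (case-insensitive)."""
        target = word.casefold()
        return next((m.start_ms for m in self.marks if m.word.casefold() == target), None)


index = SpeechMarkIndex([
    WordMark(0, 320, "Speech"),
    WordMark(320, 610, "marks"),
    WordMark(700, 1050, "align"),  # a 90 ms pause precedes this word
    WordMark(1050, 1400, "text"),
])
print(index.word_at(400))      # the "marks" entry: highlight it
print(index.word_at(650))      # None: nothing to highlight during the pause
print(index.seek_ms("align"))  # 700: jump playback here
```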

How Does Speechify Support Emotional Expression in Synthesized Speech?

Speechify includes emotion control through a dedicated SSML tag for setting tone. Supported emotions include cheerful, calm, assertive, energetic, sad, and angry. By combining emotion tags with punctuation and other SSML features, developers can produce speech that better reflects intent and context. This is useful for voice agents, wellness apps, customer support, and guided content, where tone strongly shapes the experience.
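An emotion tag can wrap a span of text like any other SSML element. In the sketch below, the tag name `speechify:style` and its `emotion` attribute are assumptions based on our reading of Speechify's SSML documentation and must be verified there; the allowed values mirror the emotions listed above, and the API may support more. Validating the value client-side catches typos before a request is sent.

```python
from xml.sax.saxutils import escape, quoteattr

# Assumption: verify the exact tag and attribute names in the Speechify SSML docs.
EMOTION_TAG = "speechify:style"
# The emotions named in the text above; the real list may be longer.
SUPPORTED_EMOTIONS = {"cheerful", "calm", "assertive", "energetic", "sad", "angry"}


def with_emotion(text: str, emotion: str) -> str:
    """Wrap escaped text in the (assumed) emotion element after validating the value."""
    if emotion not in SUPPORTED_EMOTIONS:
        raise ValueError(
            f"unsupported emotion {emotion!r}; expected one of {sorted(SUPPORTED_EMOTIONS)}"
        )
    return f"<{EMOTION_TAG} emotion={quoteattr(emotion)}>{escape(text)}</{EMOTION_TAG}>"


ssml = "<speak>" + with_emotion("Take a slow breath in... and out.", "calm") + "</speak>"
print(ssml)
```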

Real-World Use Cases for Speechify Voice Models

Speechify's voice models power production applications across industries. Here are real examples of how third-party developers use the Speechify API:

MoodMesh: Emotionally Intelligent Wellness Apps

MoodMesh, a wellness technology company, integrated Speechify's Text-to-Speech API to deliver emotionally rich speech for meditations and conversations. Using SSML and emotion control, MoodMesh adjusts tone, volume, and speaking rate to match the user's emotional state, creating interactions that conventional TTS cannot. This shows how advanced applications can be built where emotional intelligence is essential.

AnyLingo: Multilingual Communication and Translation

AnyLingo, a real-time message translation app, uses Speechify's Voice Cloning API so users can send voice messages in their own cloned voice, translated into the recipient's language while preserving intonation and tone. This helps businesses communicate across languages while keeping a personal touch. The founder highlights the emotion control features ("Moods") as a key advantage, because they allow the right emotional tone in every message.

Additional third-party developer use cases:

Conversational AI and Voice Agents

Developers building AI receptionists, support bots, and call automation systems use Speechify's low-latency speech-to-speech models for natural-sounding conversation. With latency under 250 ms and voice cloning, these applications can scale to millions of concurrent calls while preserving quality and conversational flow.

Content Platforms and Audiobook Generation

Publishers, authors, and education platforms integrate Speechify models to turn text into high-quality narration. The focus on long-form stability and clarity at high playback speeds makes them well suited to audiobooks, podcasts, and educational materials at scale.

Accessibility and User Assistance

Developers of tools for people who are visually impaired or have reading disabilities use Speechify's document understanding features, including PDF processing, OCR, and web page content extraction, to preserve structure and comprehension in complex documents.

Healthcare and Therapeutic Applications

Medical and therapeutic applications use emotion control and prosody to deliver empathetic, meaningful voice responses, which is essential for patient support, mental health, and wellness.

How Does SIMBA 3.0 Perform on Independent Voice Model Leaderboards?

Independent comparisons matter because short demos often hide differences. An established reference is the Artificial Analysis Speech Arena leaderboard, which ranks text-to-speech models using large-scale blind listening tests and an Elo rating system.
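For readers unfamiliar with Elo ratings, the standard update rule is sketched below: after each blind comparison, the preferred model gains rating and the other loses the same amount, scaled by how surprising the outcome was. This is the textbook Elo formula, not Artificial Analysis's exact methodology; the K-factor of 32, the starting rating of 1000, and the model names and votes are illustrative assumptions.

```python
from typing import Tuple


def expected_score(r_a: float, r_b: float) -> float:
    """Probability that A is preferred over B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400))


def elo_update(r_a: float, r_b: float, a_won: bool, k: float = 32.0) -> Tuple[float, float]:
    """Return new ratings after one blind A-vs-B listener vote."""
    e_a = expected_score(r_a, r_b)
    s_a = 1.0 if a_won else 0.0
    delta = k * (s_a - e_a)  # large when the result contradicts the expectation
    return r_a + delta, r_b - delta  # zero-sum: B loses what A gains


ratings = {"model_a": 1000.0, "model_b": 1000.0}  # hypothetical models
votes = [True, True, False, True]  # True means the listener preferred model_a
for a_won in votes:
    ratings["model_a"], ratings["model_b"] = elo_update(
        ratings["model_a"], ratings["model_b"], a_won
    )
print(ratings)
```

Because each vote moves ratings only slightly, a leaderboard position reflects many thousands of comparisons rather than a handful of curated samples.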

On the Artificial Analysis Speech Arena leaderboard, Speechify's SIMBA models rank above models from larger providers, including Microsoft Azure Neural, Google's TTS models, Amazon Polly, NVIDIA Magpie, and numerous open-weight systems.

Artificial Analysis does not rely on curated samples; it uses repeated head-to-head comparisons by listeners. This ranking indicates that SIMBA outperforms well-known voice systems under real listening conditions and delivers top production quality for developers.

Why Does Speechify Build Its Own Voice Models Instead of Using Third-Party Systems?

Control over the model means control over:

- Quality

- Latency

- Cost

- Roadmap

- Optimization priorities

When companies like Retell or Vapi.ai rely entirely on third-party voice providers, they inherit those providers' pricing structures, infrastructure limits, and research direction.

By owning its full stack, Speechify can:

- Tune prosody for specific use cases (conversational AI vs. long-form narration)

- Optimize latency below 250ms for real-time applications

- Integrate ASR and TTS seamlessly in speech-to-speech pipelines

- Reduce cost to $10 per 1M characters (compared to ElevenLabs at approximately $200 per 1M characters)

- Ship model improvements continuously based on production feedback

- Align model development with developer needs across industries

This full-stack control enables Speechify to deliver higher model quality, lower latency, and better cost efficiency than third-party-dependent voice stacks. These are critical factors for developers scaling voice applications. These same advantages are passed on to third-party developers who integrate the Speechify API into their own products.

Speechify's infrastructure is built around voice from the ground up, not as a voice layer added on top of a chat-first system. Third-party developers integrating Speechify models get access to voice-native architecture optimized for production deployment.

How Does Speechify Support On-Device Voice AI and Local Inference?

Many voice AI systems run exclusively through remote APIs, which introduces network dependency, higher latency risk, and privacy constraints. Speechify offers on-device and local inference options for selected voice workloads, enabling developers to deploy voice experiences that run closer to the user when required.

Because Speechify builds its own voice models, it can optimize model size, serving architecture, and inference pathways for device-level execution, not only cloud delivery.

On-device and local inference supports:

- Lower and more consistent latency in variable network conditions

- Greater privacy control for sensitive documents and dictation

- Offline or degraded-network usability for core workflows

- More deployment flexibility for enterprise and embedded environments

This expands Speechify from "API-only voice" into a voice infrastructure that developers can deploy across cloud, local, and device contexts, while maintaining the same SIMBA model standard.

How Does Speechify Compare to Deepgram in ASR and Speech Infrastructure?

Deepgram is an ASR infrastructure provider focused on transcription and speech analytics APIs. Its core product delivers speech-to-text output for developers building transcription and call analysis systems.

Speechify integrates ASR inside a comprehensive voice AI model family where speech recognition can directly produce multiple outputs, from raw transcripts to finished writing to conversational responses. Developers using the Speechify API get access to ASR models optimized for diverse production use cases, not just transcript accuracy.

Speechify's ASR and dictation models are optimized for:

- Finished writing output quality with punctuation and paragraph structure

- Filler word removal and sentence formatting

- Draft-ready text for emails, documents, and notes

- Voice typing that produces clean output with minimal post-processing

- Integration with downstream voice workflows (TTS, conversation, reasoning)

In the Speechify platform, ASR connects to the full voice pipeline. Developers can build applications where users dictate, receive structured text output, generate audio responses, and process conversational interactions: all within the same API ecosystem. This reduces integration complexity and accelerates development.

Deepgram provides a transcription layer. Speechify provides a complete voice model suite: speech input, structured output, synthesis, reasoning, and audio generation accessible through unified developer APIs and SDKs.

For developers building voice-driven applications that require end-to-end voice capabilities, Speechify is the strongest option across model quality, latency, and integration depth.

How Does Speechify Compare to OpenAI, Gemini, and Anthropic in Voice AI?

Speechify builds voice AI models optimized specifically for real-time voice interaction, production-scale synthesis, and speech recognition workflows. Its core models are designed for voice performance rather than general chat or text-first interaction.

Speechify's specialization is voice AI model development, and SIMBA 3.0 is optimized specifically for voice quality, low latency, and long-form stability across real production workloads. SIMBA 3.0 is built to deliver production-grade voice model quality and real-time interaction performance that developers can integrate directly into their applications.

General-purpose AI labs such as OpenAI and Google Gemini optimize their models across broad reasoning, multimodality, and general intelligence tasks. Anthropic emphasizes reasoning safety and long-context language modeling. Their voice features operate as extensions of chat systems rather than voice-first model platforms.

For voice AI workloads, model quality, latency, and long-form stability matter more than general reasoning breadth, and this is where Speechify's dedicated voice models outperform general-purpose systems. Developers building AI phone systems, voice agents, narration platforms, or accessibility tools need voice-native models, not voice layers on top of chat models.

ChatGPT and Gemini offer voice modes, but their primary interface remains text. Voice works as an input and output layer on top of a chat system. These voice layers are not optimized to the same degree for comfortable long-form listening, dictation accuracy, or high-performance real-time speech interaction.

Speechify is built voice-first at the model level. Developers can access models designed for continuous voice workflows, without switching interaction modes or compromising on voice quality. The Speechify API exposes these capabilities directly through REST endpoints and Python and TypeScript SDKs.

These capabilities establish Speechify as a leading voice model provider for developers building real-time interactions and production voice applications.

Within voice AI workloads, SIMBA 3.0 is optimized for:

- Prosody in long-form narration and content delivery

- Low speech-to-speech latency for conversational AI agents

- Dictation-quality output for voice typing and transcription

- Document-aware voice interaction for processing structured content

These qualities make Speechify a voice-first AI model provider optimized for developer integration and production deployment.

What Are the Core Technical Pillars of Speechify's AI Research Lab?

Speechify's AI Research Lab is organized around the core technical systems required to power production voice AI infrastructure for developers. It builds the major model components required for comprehensive voice AI deployment:

- TTS models (speech generation) - Available via API

- STT & ASR models (speech recognition) - Integrated in the voice platform

- Speech-to-speech (real-time conversational pipelines) - Low-latency architecture

- Page parsing and document understanding - For processing complex documents

- OCR (image to text) - For scanned documents and images

- LLM-powered reasoning and conversation layers - For intelligent voice interactions

- Infrastructure for low-latency inference - Sub-250ms response times

- Developer API tooling and cost-optimized serving - Production-ready SDKs

Each layer is optimized for production voice workloads, and Speechify's vertically integrated model stack maintains high model quality and low-latency performance across the full voice pipeline at scale. Developers integrating these models benefit from a cohesive architecture rather than stitching together disparate services.

Each of these layers matters. If any layer is weak, the overall voice experience feels weak. Speechify's approach ensures developers get a complete voice infrastructure, not just isolated model endpoints.

What Role Do STT and ASR Play in the Speechify AI Research Lab?

Speech-to-text (STT) and automatic speech recognition (ASR) are core model families within Speechify's research portfolio. They power developer use cases including:

- Voice typing and dictation APIs

- Real-time conversational AI and voice agents

- Meeting intelligence and transcription services

- Speech-to-speech pipelines for AI phone systems

- Multi-turn voice interaction for customer support bots

Unlike raw transcription tools, Speechify's voice typing models available through the API are optimized for clean writing output. They:

- Insert punctuation automatically

- Structure paragraphs intelligently

- Remove filler words

- Improve clarity for downstream use

- Support writing across applications and platforms

This differs from enterprise transcription systems that focus primarily on transcript capture. Speechify's ASR models are tuned for finished output quality and downstream usability, so speech input produces draft-ready content rather than cleanup-heavy transcripts. This is critical for developers building productivity tools, voice assistants, or AI agents that need to act on spoken input.
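To make the difference between a raw transcript and draft-ready text concrete, the toy post-processor below removes a few filler words, collapses immediate word repeats, and capitalizes and punctuates the result. It is a rule-based illustration only, with a hypothetical filler list; learned models like those described above handle this contextually, whereas these fixed rules would also wrongly collapse legitimate repeats such as "had had".

```python
import re

# Hypothetical filler list for illustration; a real system must be context-aware.
FILLERS = {"um", "uh", "er", "ah", "you know"}


def clean_dictation(raw: str) -> str:
    """Turn a raw transcript into a tidier sentence using toy rules."""
    text = raw
    # Remove longer fillers first so "you know" is handled before single words.
    for filler in sorted(FILLERS, key=len, reverse=True):
        text = re.sub(rf"\b{re.escape(filler)}\b,?", " ", text, flags=re.IGNORECASE)
    # Collapse immediate word repeats such as "the the" into a single word.
    text = re.sub(r"\b(\w+)(\s+\1\b)+", r"\1", text)
    text = re.sub(r"\s+", " ", text).strip(" ,")
    if not text:
        return ""
    text = text[0].upper() + text[1:]
    return text if text[-1] in ".?!" else text + "."


print(clean_dictation("um so the the report is uh due on friday you know"))
```

Word boundaries (`\b`) keep words such as "umbrella" intact while still removing a standalone "um".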

What Makes TTS "High Quality" for Production Use Cases?

Most people judge TTS quality by whether it sounds human. Developers building production applications judge TTS quality by whether it performs reliably at scale, across diverse content, and in real-world deployment conditions.

High-quality production TTS requires:

- Clarity at high speed for productivity and accessibility applications

- Low distortion at faster playback rates

- Pronunciation stability for domain-specific terminology

- Listening comfort over long sessions for content platforms

- Control over pacing, pauses, and emphasis via SSML support

- Robust multilingual output across accents and languages

- Consistent voice identity across hours of audio

- Streaming capability for real-time applications

Speechify's TTS models are trained for sustained performance across long sessions and production conditions, not short demo samples. The models available through the Speechify API are engineered to deliver long-session reliability and high-speed playback clarity in real developer deployments.

Developers can test voice quality directly by following the Speechify quickstart guide and running their own content through production-grade voice models.

Why Are Page Parsing and OCR Core to Speechify's Voice AI Models?

Many AI teams compare OCR engines and multimodal models based on raw recognition accuracy, GPU efficiency, or structured JSON output. Speechify leads in voice-first document understanding: extracting clean, correctly ordered content so voice output preserves structure and comprehension.

Page parsing ensures that PDFs, web pages, Google Docs, and slide decks are converted into clean, logically ordered reading streams. Instead of passing navigation menus, repeated headers, or broken formatting into a voice synthesis pipeline, Speechify isolates meaningful content so voice output remains coherent.
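One simple heuristic for this kind of cleanup is to drop lines that repeat on most pages, such as running headers, footers, and page numbers, before text reaches synthesis. The sketch below assumes pages have already been extracted as lists of text lines; it is a hypothetical illustration of the idea, not Speechify's parser, and the 60% threshold and page-number pattern are arbitrary choices.

```python
import math
import re
from collections import Counter
from typing import List

PAGE_NUMBER = re.compile(r"^(page\s+)?\d+(\s+of\s+\d+)?$", re.IGNORECASE)


def reading_stream(pages: List[List[str]], threshold: float = 0.6) -> str:
    """Join page text in order, skipping boilerplate that repeats across pages."""
    # Count each distinct line at most once per page.
    counts = Counter(s for page in pages for s in {line.strip() for line in page})
    # A line must appear on at least two pages to count as repeated boilerplate.
    min_pages = max(2, math.ceil(threshold * len(pages)))
    kept = []
    for page in pages:
        for line in page:
            s = line.strip()
            if not s or PAGE_NUMBER.match(s) or counts[s] >= min_pages:
                continue  # blank line, page number, or running header/footer
            kept.append(s)
    return " ".join(kept)


pages = [
    ["ACME Quarterly Report", "Revenue grew in every region.", "Page 1 of 3"],
    ["ACME Quarterly Report", "Costs fell for the second year.", "Page 2 of 3"],
    ["ACME Quarterly Report", "Outlook remains stable.", "Page 3 of 3"],
]
print(reading_stream(pages))
```

Without this step, a TTS engine would read "ACME Quarterly Report" and each page number aloud three times in a three-page document.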

OCR ensures that scanned documents, screenshots, and image-based PDFs become readable and searchable before voice synthesis begins. Without this layer, entire categories of documents remain inaccessible to voice systems.

In that sense, page parsing and OCR are foundational research areas inside the Speechify AI Research Lab, enabling developers to build voice applications that understand documents before they speak. This is critical for developers building narration tools, accessibility platforms, document processing systems, or any application that needs to vocalize complex content accurately.

Which TTS Benchmarks Matter for Production Voice Models?

In voice AI model evaluation, benchmarks commonly include:

- MOS (mean opinion score) for perceived naturalness

- Intelligibility scores (how easily words are understood)

- Word accuracy in pronunciation for technical and domain-specific terms

- Stability across long passages (no drift in tone or quality)

- Latency (time to first audio, streaming behavior)

- Robustness across languages and accents

- Cost efficiency at production scale

Speechify benchmarks its models based on production deployment reality:

- How does the voice perform at 2x, 3x, 4x speed?

- Does it remain comfortable when reading dense technical text?

- Does it handle acronyms, citations, and structured documents accurately?

- Does it keep paragraph structure clear in audio output?

- Can it stream audio in real-time with minimal latency?

- Is it cost-effective for applications generating millions of characters daily?

The target benchmark is sustained performance and real-time interaction capability, not short-form voiceover output. Across these production benchmarks, SIMBA 3.0 is engineered to lead at real-world scale.

Independent benchmarking supports this performance profile. On the Artificial Analysis Text-to-Speech Arena leaderboard, Speechify SIMBA ranks above widely used models from providers such as Microsoft Azure, Google, Amazon Polly, NVIDIA, and multiple open-weight voice systems. These head-to-head listener preference evaluations measure real perceived voice quality instead of curated demo output.

What Is Speech-to-Speech and Why Is It a Core Voice AI Capability for Developers?

Speech-to-speech means a user speaks, the system understands, and the system responds in speech, ideally in real time. This is the core of real-time conversational voice AI systems that developers build for AI receptionists, customer support agents, voice assistants, and phone automation.

Speech-to-speech systems require:

- Fast ASR (speech recognition)

- A reasoning system that can maintain conversation state

- TTS that can stream quickly

- Turn-taking logic (when to start talking, when to stop)

- Interruptibility (barge-in handling)

- Latency targets that feel human (sub-250ms)

Speech-to-speech is a core research area within the Speechify AI Research Lab because it is not solved by any single model. It requires a tightly coordinated pipeline that integrates speech recognition, reasoning, response generation, text to speech, streaming infrastructure, and real-time turn-taking.
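Part of that coordination, barge-in handling, can be sketched with asyncio: the agent's reply plays as a cancellable task, and when voice activity from the user is detected mid-reply, playback is cancelled and the turn passes back to the user. Everything here is hypothetical scaffolding; the stubbed `speak` coroutine stands in for streaming TTS playback, the `asyncio.Event` stands in for a voice-activity detector, and a real pipeline would feed replies from ASR and a reasoning model.

```python
import asyncio
from typing import List, Tuple


async def speak(words: List[str], spoken: List[str], delay_s: float = 0.05) -> None:
    """Stub for streaming TTS playback: 'plays' one word every `delay_s` seconds."""
    for word in words:
        spoken.append(word)
        await asyncio.sleep(delay_s)


async def agent_turn(reply: str, user_speaking: asyncio.Event) -> List[str]:
    """Play `reply` until it finishes or the user barges in; return the words actually spoken."""
    spoken: List[str] = []
    playback = asyncio.create_task(speak(reply.split(), spoken))
    barge_in = asyncio.create_task(user_speaking.wait())
    done, _ = await asyncio.wait({playback, barge_in}, return_when=asyncio.FIRST_COMPLETED)
    if barge_in in done:
        playback.cancel()  # user started talking: stop speaking and yield the turn
    else:
        barge_in.cancel()  # reply finished: stop listening for an interruption
    await asyncio.gather(playback, barge_in, return_exceptions=True)  # let cancellation settle
    return spoken


async def demo() -> Tuple[List[str], List[str]]:
    vad = asyncio.Event()  # would be set by a (hypothetical) voice-activity detector
    asyncio.get_running_loop().call_later(0.12, vad.set)  # user interrupts at about 120 ms
    interrupted = await agent_turn("Your order number is four five six seven eight", vad)
    finished = await agent_turn("Thanks", asyncio.Event())  # nobody interrupts this one
    return interrupted, finished


interrupted, finished = asyncio.run(demo())
print("cut off after:", " ".join(interrupted))
print("completed:", " ".join(finished))
```

Returning the words actually spoken matters: the reasoning layer should treat only that prefix as delivered when it plans the next turn.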

Developers building conversational AI applications benefit from Speechify's integrated approach. Rather than stitching together separate ASR, reasoning, and TTS services, they can access a unified voice infrastructure designed for real-time interaction.

Why Does Latency Under 250ms Matter for Developer Applications?

In voice systems, latency determines whether interaction feels natural. Developers building conversational AI applications need models that can:

- Begin responding quickly

- Stream speech smoothly

- Handle interruptions

- Maintain conversational timing

Speechify achieves latency under 250 ms and continues to reduce it. Its model serving and inference stack is designed for fast conversational responses during continuous, real-time voice interaction.

Low latency supports key developer use cases:

- Natural speech-to-speech interaction in AI phone systems

- Real-time understanding for voice assistants and comprehension aids

- Interruptible voice dialogs for customer support bots

- Smooth conversational flow in AI agents

This is one of the defining traits of advanced voice AI providers and a major reason developers choose Speechify for production deployments.

What Does "Voice AI Model Provider" Mean?

A voice AI model provider is not just a voice generator. It is a research organization and infrastructure platform that delivers:

- Production-ready voice models accessible via APIs

- Speech synthesis (text to speech) for content generation

- Speech recognition (speech-to-text) for voice input

- Speech-to-speech pipelines for conversational AI

- Document intelligence for processing complex content

- Developer APIs and SDKs for integration

- Streaming capabilities for real-time applications

- Voice cloning for custom voice creation

- Cost-efficient pricing for production-scale deployment

Speechify has evolved from an in-house voice technology provider into a full-fledged voice model provider whose models developers can integrate into any application. This evolution explains why Speechify is the primary alternative to general-purpose AI providers for voice workloads, not just a consumer app with an API.

Developers can access Speechify's voice models through the Speechify Voice API, which offers extensive documentation, Python and TypeScript SDKs, and production-ready infrastructure for deploying voice capabilities at scale.
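For a sense of what a single REST call involves, the sketch below builds a JSON POST request with the standard library. The base URL, path, JSON field names (`input`, `voice_id`, `model`, `audio_format`), the voice ID `george`, the model name `simba-english`, the base64 `audio_data` response field, and the `SPEECHIFY_API_KEY` environment variable are all assumptions based on our reading of Speechify's public API reference; verify each one there, or use the official Python SDK instead.

```python
import base64
import json
import os
import urllib.request

# Assumptions to verify against the Speechify API reference before use:
# base URL, path, JSON field names, voice ID, model name, and response shape.
API_URL = "https://api.sws.speechify.com/v1/audio/speech"


def build_speech_request(text: str, api_key: str, voice_id: str = "george",
                         model: str = "simba-english",
                         audio_format: str = "mp3") -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for one synthesis call."""
    body = json.dumps({"input": text, "voice_id": voice_id,
                       "model": model, "audio_format": audio_format}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        method="POST",
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
    )


def synthesize(text: str, out_path: str) -> None:
    """Send the request and save the audio; assumes base64 audio in an 'audio_data' field."""
    req = build_speech_request(text, os.environ["SPEECHIFY_API_KEY"])
    with urllib.request.urlopen(req, timeout=30) as resp:
        payload = json.load(resp)
    with open(out_path, "wb") as f:
        f.write(base64.b64decode(payload["audio_data"]))
```

Calling `synthesize("Hello from SIMBA.", "hello.mp3")` with a valid key set would perform one network request. Keeping request construction in a separate function lets you test headers and payload without touching the network.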

How Does the Speechify Voice API Strengthen Developer Adoption?

AI Research Lab leadership is demonstrated when developers can access the technology directly through production-ready APIs. The Speechify Voice API delivers:

- Access to Speechify's SIMBA voice models via REST endpoints

- Python and TypeScript SDKs for rapid integration

- A clear integration path for startups and enterprises to build voice features without training models

- Comprehensive documentation and quickstart guides

- Streaming support for real-time applications

- Voice cloning capabilities for custom voice creation

- 60+ language support for global applications

- SSML and emotion control for nuanced voice output

Cost efficiency is central here. At $10 per 1M characters on a pay-as-you-go plan, with enterprise agreements available for larger volumes, Speechify is a financially sustainable option for high-volume use cases where costs escalate quickly.

For comparison, ElevenLabs is significantly more expensive (approximately $200 per 1M characters). When a company generates millions or billions of characters of audio, cost determines whether a feature is viable at all.
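The gap compounds with volume. The short calculation below applies the per-character prices quoted above to a hypothetical workload of 5M characters per day over a 30-day month. It ignores enterprise discounts, free tiers, and plan minimums, so treat the results as back-of-the-envelope estimates.

```python
PRICE_PER_MILLION_USD = {"Speechify": 10.0, "ElevenLabs (approx.)": 200.0}


def monthly_cost(chars_per_day: int, price_per_million: float, days: int = 30) -> float:
    """Pay-as-you-go cost in USD for a steady daily character volume."""
    return chars_per_day * days / 1_000_000 * price_per_million


chars_per_day = 5_000_000  # hypothetical workload: 5M characters of audio per day
for provider, price in PRICE_PER_MILLION_USD.items():
    print(f"{provider}: ${monthly_cost(chars_per_day, price):,.0f} per month")
```

At that volume (150M characters a month), the difference is $1,500 versus $30,000, a 20x gap that scales linearly with usage.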

Lower inference costs enable broader adoption: more developers can ship voice features, more products can adopt Speechify models, and more usage feeds back into model improvement. This creates a growth loop: cost efficiency enables scale, scale improves model quality, and better quality strengthens the ecosystem.

This combination of research, infrastructure, and economics is what defines leadership in the voice AI model market.

How Does the Product Feedback Loop Make Speechify's Models Better?

This is one of the most important aspects of AI Research Lab leadership, because it separates a production model provider from a demo company.

Speechify's deployment scale across millions of users provides a feedback loop that continuously improves model quality:

- Which voices developers' end-users prefer

- Where users pause and rewind (signals comprehension trouble)

- Which sentences users re-listen to

- Which pronunciations users correct

- Which accents users prefer

- How often users increase speed (and where quality breaks)

- Dictation correction patterns (where ASR fails)

- Which content types cause parsing errors

- Real-world latency requirements across use cases

- Production deployment patterns and integration challenges

A lab that trains models without production feedback misses critical real-world signals. Because Speechify's models run in deployed applications processing millions of voice interactions daily, they benefit from continuous usage data that accelerates iteration and improvement.

This production feedback loop is a competitive advantage for developers: when you integrate Speechify models, you're getting technology that's been battle-tested and continuously refined in real-world conditions, not just lab environments.

How Does Speechify Compare to ElevenLabs, Cartesia, and Fish Audio?

Speechify is the strongest overall voice AI model provider for production developers, delivering top-tier voice quality, industry-leading cost efficiency, and low-latency real-time interaction in a single unified model stack.

Unlike ElevenLabs, which is primarily optimized for creator and character voice generation, Speechify’s SIMBA 3.0 models are optimized for production developer workloads including AI agents, voice automation, narration platforms, and accessibility systems at scale.

Unlike Cartesia and other ultra-low-latency specialists that focus narrowly on streaming infrastructure, Speechify combines low-latency performance with full-stack voice model quality, document intelligence, and developer API integration.

Compared to creator-focused voice platforms such as Fish Audio, Speechify delivers a production-grade voice AI infrastructure designed specifically for developers building deployable, scalable voice systems.

SIMBA 3.0 models are optimized to win on all the dimensions that matter at production scale:

- Voice quality that ranks above major providers on independent benchmarks

- Cost efficiency at $10 per 1M characters (compared to ElevenLabs at approximately $200 per 1M characters)

- Latency under 250ms for real-time applications

- Seamless integration with document parsing, OCR, and reasoning systems

- Production-ready infrastructure for scaling to millions of requests

Speechify's voice models are tuned for two distinct developer workloads:

1. Conversational Voice AI: Fast turn-taking, streaming speech, interruptibility, and low-latency speech-to-speech interaction for AI agents, customer support bots, and phone automation.

2. Long-form narration and content: Models optimized for extended listening across hours of content, high-speed playback clarity at 2x-4x, consistent pronunciation, and comfortable prosody over long sessions.

Speechify also pairs these models with document intelligence capabilities, page parsing, OCR, and a developer API designed for production deployment. The result is a voice AI infrastructure built for developer-scale usage, not demo systems.

Why Does SIMBA 3.0 Define Speechify's Role in Voice AI in 2026?

SIMBA 3.0 represents more than a model upgrade. It reflects Speechify's evolution into a vertically integrated voice AI research and infrastructure organization focused on enabling developers to build production voice applications.

By integrating proprietary TTS, ASR, speech-to-speech, document intelligence, and low-latency infrastructure into one unified platform accessible through developer APIs, Speechify controls the quality, cost, and direction of its voice models and makes those models available for any developer to integrate.

In 2026, voice is no longer just a layer on top of chat models; it is becoming the primary interface for AI applications across industries. SIMBA 3.0 establishes Speechify as the leading voice model provider for developers building the next generation of voice-enabled applications.