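Speechify has announced the early release of SIMBA 3.0, its latest generation of production-grade voice AI models. It is currently available to select third-party developers through the Speechify Voice API, with general availability planned for March 2026. Built by the Speechify AI Research Lab, SIMBA 3.0 provides high-quality text-to-speech (TTS), speech-to-text (STT), and speech-to-speech capabilities in a form that developers can build directly into their own products and platforms.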

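"SIMBA 3.0 was built for real production voice workloads, with a focus on long-form stability, low latency, and reliable performance at scale. Our goal is to give developers a powerful voice model that is easy to integrate and ready for real-world applications from day one," said Raheel Kazi, Head of Engineering at Speechify.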

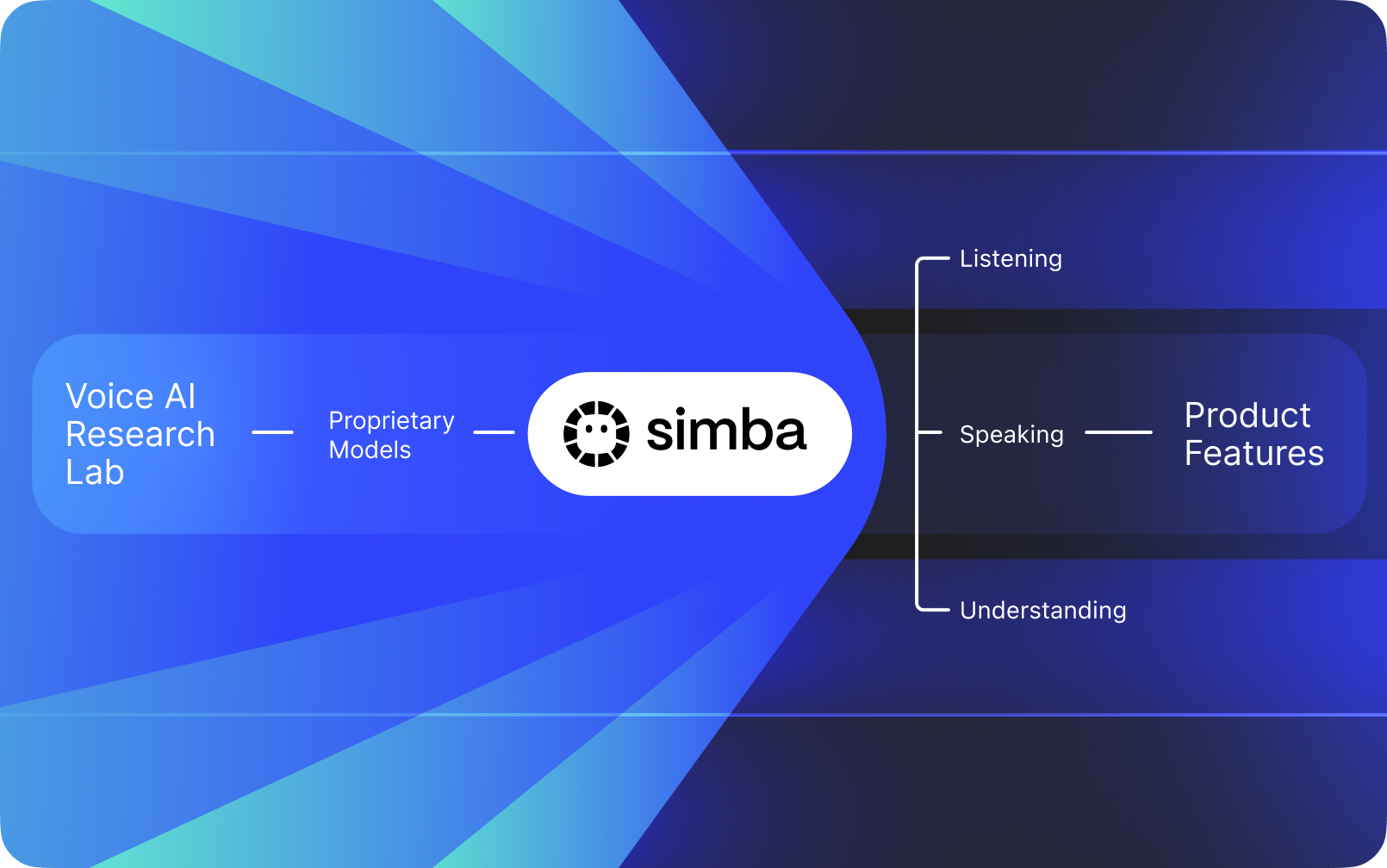

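Speechify is not a voice interface layered on top of another company's AI. It runs its own AI research lab dedicated to building proprietary voice models, and those models are sold to third-party companies and developers through the Speechify API. They can be integrated into applications such as AI receptionists, customer support bots, content platforms, and accessibility tools.

Speechify uses the same voice models in its own consumer products while also offering them to developers through the Speechify Voice API. This matters because the quality, latency, cost, and long-term direction of Speechify's voice models are controlled by its own research team rather than by an external vendor.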

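Speechify's voice models are designed specifically for production use and are built to deliver top-tier quality at scale. Third-party developers can access SIMBA 3.0 and other Speechify voice models directly through the Speechify Voice API, which comes with production REST endpoints, full API documentation, quick-start guides, and officially supported Python and TypeScript SDKs. The Speechify developer platform is designed for fast integration, production deployment, and scalable voice infrastructure, so teams can move from a first API call to a live voice feature in a short time.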

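This article explains what SIMBA 3.0 is, what the Speechify AI Research Lab is building, and why Speechify can deliver top-tier voice AI model quality, low latency, and cost efficiency for production workloads. It argues that these strengths put Speechify ahead of other voice and multimodal AI providers such as OpenAI, Gemini, Anthropic, ElevenLabs, Cartesia, and Deepgram.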

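What does it mean to call Speechify an AI research lab?

An artificial intelligence research lab (AI lab) is a dedicated research and engineering organization where specialists in machine learning, data, and computational modeling design, train, and deploy advanced intelligent systems. In practice, "AI research lab" usually refers to an organization that does two things at once:

1. Develops and trains its own models

2. Makes those models available to developers through production APIs and SDKs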

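Some organizations excel at model development but do not open their models to outside developers, while others offer an API but rely mainly on third-party models. Speechify operates a vertically integrated voice AI stack: it builds its own voice AI models, offers them to third-party developers through production APIs, and deploys them in its own consumer apps, where their performance is validated at scale.

The Speechify AI Research Lab is an in-house research organization focused on voice intelligence. Its mission is to advance text-to-speech, speech recognition, and speech-to-speech systems so that developers can build any voice-first application, from AI receptionists to narration and accessibility tools.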

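A serious voice AI research lab typically has to solve the following problems:

- Text-to-speech quality and naturalness at a production-ready level

- Speech recognition (STT/ASR) accuracy across accents and noisy conditions

- Real-time, low-latency response for conversational AI agents

- Stability during long-form listening

- Document understanding for structured content such as PDFs and web pages

- OCR and page parsing for scanned documents and images

- Product feedback loops that drive continuous model improvement

- Developer infrastructure that exposes voice capabilities through APIs and SDKs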

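Speechify's AI Research Lab builds these capabilities as a unified architecture and offers them to developers through the Speechify Voice API, in a form that can be integrated into any platform or application.

What is SIMBA 3.0?

SIMBA is Speechify's proprietary family of voice AI models. It powers Speechify's own products and is offered to third-party developers through the API. SIMBA 3.0 is the latest generation, optimized for voice-first performance, speed, and real-time interaction, and external developers can integrate it into their own platforms.

SIMBA 3.0 is designed to deliver high-fidelity voice quality, low-latency response, and long-form listening stability at production scale, so developers can build professional voice applications across industries.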

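Third-party developers can use SIMBA 3.0 for applications such as:

- AI voice agents and conversational AI systems

- Customer support automation and AI receptionists

- Outbound calling systems for sales and service

- Voice assistants and speech-to-speech applications

- Content narration and audiobook generation platforms

- Accessibility and assistive tools

- Education platforms with voice-based learning

- Healthcare applications that need empathetic voice interaction

- Multilingual translation and communication apps

- Voice-enabled IoT and automotive systems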

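When users describe a voice as "human-sounding," they are responding to several technical elements working together:

- Prosody (rhythm, pitch, emphasis)

- Pacing that follows meaning

- Natural pauses

- Stable pronunciation

- Intonation shifts that follow context

- Emotional neutrality where appropriate

- Expressiveness suited to the situation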

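SIMBA 3.0 is the model layer that lets developers deliver voice experiences that sound natural, respond quickly, and stay engaging across long sessions and varied content. It is also optimized for production-scale voice workloads such as AI phone systems and content platforms, where it is built to outperform general-purpose voice layers.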

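How does Speechify use SSML to enable precise voice control?

Speechify supports Speech Synthesis Markup Language (SSML), which lets developers fine-tune the pitch, speaking rate, pauses, emphasis, and style of synthesized speech inside a <speak> element, using tags such as prosody, break, emphasis, and substitution (sub). This makes it easier to fit the output to its context, format, and intent, and to tune voices for production use.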
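
As a minimal sketch of what such markup can look like, the Python snippet below assembles an SSML document with the standard library. The element names come from the W3C SSML specification; the function name `build_ssml` and the specific attribute values (`slow`, `+2st`, `400ms`) are illustrative assumptions, and which values a given Speechify voice accepts should be checked against the API documentation.

```python
import xml.etree.ElementTree as ET


def build_ssml(sentence: str, emphasized: str, abbreviation: str, spoken_as: str) -> str:
    """Return an SSML string that slows one sentence, pauses, and stresses a phrase."""
    speak = ET.Element("speak")

    # <prosody> changes rate and pitch for the text it wraps.
    prosody = ET.SubElement(speak, "prosody", {"rate": "slow", "pitch": "+2st"})
    prosody.text = sentence

    # <break> inserts a pause; 400 ms is an arbitrary illustrative value.
    ET.SubElement(speak, "break", {"time": "400ms"})

    # <emphasis> stresses a phrase.
    emphasis = ET.SubElement(speak, "emphasis", {"level": "strong"})
    emphasis.text = emphasized
    emphasis.tail = " "

    # <sub> speaks the alias in place of the written abbreviation.
    sub = ET.SubElement(speak, "sub", {"alias": spoken_as})
    sub.text = abbreviation

    # ElementTree escapes characters such as & and < in the text for us.
    return ET.tostring(speak, encoding="unicode")


if __name__ == "__main__":
    print(build_ssml("Welcome back.", "Please listen carefully.", "TTS", "text to speech"))
```

Building the markup with an XML library rather than string concatenation keeps user-supplied text from breaking the document when it contains reserved characters.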

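How does Speechify deliver real-time audio streaming?

Speechify provides a streaming text-to-speech endpoint that delivers audio in chunks as it is generated, so playback can begin immediately instead of waiting for the full audio to finish. This suits long-form and low-latency use cases such as voice agents, assistive technology, and automated podcast or audiobook generation. Developers can stream inputs longer than the standard limits and integrate raw audio chunks in formats such as MP3, OGG, AAC, and PCM in real time.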
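
The consuming side of chunked delivery can be sketched as follows. This example does not call the Speechify API: `fake_tts_stream` is a hypothetical stand-in that yields placeholder bytes, and `play_while_streaming` is an assumed helper name. The point is only that each chunk is handed to the player as soon as it arrives.

```python
from typing import Callable, Iterable, Iterator


def fake_tts_stream(text: str, chunk_size: int = 8) -> Iterator[bytes]:
    """Hypothetical stand-in for a streaming response; yields placeholder bytes, not audio."""
    payload = text.encode("utf-8")
    for start in range(0, len(payload), chunk_size):
        yield payload[start:start + chunk_size]


def play_while_streaming(chunks: Iterable[bytes], sink: Callable[[bytes], None]) -> int:
    """Pass each chunk to the sink as soon as it arrives and return the total byte count."""
    total = 0
    for chunk in chunks:
        if not chunk:
            continue  # skip empty keep-alive chunks
        sink(chunk)
        total += len(chunk)
    return total


if __name__ == "__main__":
    received = []
    size = play_while_streaming(fake_tts_stream("hello streaming world"), received.append)
    print(size, len(received))
```

In a real integration the sink would feed an audio player or a socket, and the iterable would come from the HTTP client's streamed response body.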

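How do speech marks synchronize text and audio in Speechify?

Speech marks map the spoken audio back to the original text with word-level timing. Each synthesis response includes timing data for every word, showing where in the audio that word starts and ends. This enables real-time text highlighting, seeking by word or phrase, usage analytics, and precise synchronization between on-screen text and playback. Developers can use it to build accessible readers, learning tools, and interactive listening experiences.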
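
To illustrate how word-level timing can drive highlighting, here is a small sketch that looks up the word under the playback position. The `WordMark` field names are hypothetical and do not reflect the actual response schema, which should be taken from the API reference. The marks are assumed to be sorted by start time.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class WordMark:
    # Hypothetical field names; the real response schema may differ.
    value: str
    start_ms: int
    end_ms: int
    start_char: int
    end_char: int


def word_at(marks: List[WordMark], position_ms: int) -> Optional[WordMark]:
    """Return the word spoken at position_ms, or None during silence."""
    starts = [m.start_ms for m in marks]  # marks must be sorted by start_ms
    index = bisect_right(starts, position_ms) - 1
    if index < 0:
        return None
    mark = marks[index]
    return mark if position_ms < mark.end_ms else None


def highlight(text: str, mark: WordMark) -> str:
    """Wrap the current word in brackets to mimic on-screen highlighting."""
    return text[:mark.start_char] + "[" + text[mark.start_char:mark.end_char] + "]" + text[mark.end_char:]


if __name__ == "__main__":
    text = "Hello brave world"
    marks = [
        WordMark("Hello", 0, 400, 0, 5),
        WordMark("brave", 450, 800, 6, 11),
        WordMark("world", 850, 1300, 12, 17),
    ]
    current = word_at(marks, 500)
    if current is not None:
        print(highlight(text, current))
```

The binary search keeps lookups cheap for long documents, where a player may query the current word many times per second.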

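How does Speechify support emotional expression in synthesized speech?

Speechify provides Emotion Control through an SSML style tag, which lets developers specify the emotional tone of the output, for example cheerful, calm, assertive, energetic, sad, or angry. Combined with punctuation and other SSML controls, this produces speech whose emotion matches the intent and situation, which is especially useful for voice agents, wellness apps, customer support, and guided content.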
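
A hedged sketch of wrapping text in such a style tag is shown below. The emotion names are the ones listed in this article; the element name in `STYLE_TAG` and the `emotion` attribute are assumptions made for illustration and must be confirmed against the Speechify API documentation before use.

```python
from xml.sax.saxutils import escape, quoteattr

# Emotion names taken from the list in this article.
EMOTIONS = {"cheerful", "calm", "assertive", "energetic", "sad", "angry"}

# Assumed element name; confirm it against the Speechify API documentation.
STYLE_TAG = "speechify:style"


def with_emotion(text: str, emotion: str) -> str:
    """Wrap text in a style element carrying the requested emotion."""
    if emotion not in EMOTIONS:
        raise ValueError(f"unsupported emotion: {emotion!r}")
    body = escape(text)  # protect &, <, > in user-supplied text
    return f"<speak><{STYLE_TAG} emotion={quoteattr(emotion)}>{body}</{STYLE_TAG}></speak>"


if __name__ == "__main__":
    print(with_emotion("Take a slow breath & relax.", "calm"))
```

Validating the emotion name locally gives a clear error before a request is sent, rather than relying on the service to reject unknown values.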

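Real-world developer use cases for Speechify voice models

Speechify's voice models power production applications across many industries. The following examples show how third-party developers use the Speechify API:

MoodMesh: an emotionally intelligent wellness app

MoodMesh is a wellness technology company that integrated the Speechify text-to-speech API to guide meditations and empathetic conversations with emotionally expressive speech. Using Speechify's SSML support and emotion control, it adjusts tone, pauses, volume, and speed to match the user's emotional state. The result is an interaction that feels more human than conventional TTS, and a good example of how developers can use Speechify models to build sophisticated applications where emotional intelligence and contextual awareness matter.

AnyLingo: multilingual communication and translation

AnyLingo is a real-time translation messenger app that uses Speechify's voice cloning API so that users can be heard in their own voice, with correct phrasing and intonation, in the target language. International business communication becomes more efficient while each speaker keeps a personal voice. Speechify's emotion control, called "Moods," is a key differentiator because it conveys an emotional tone suited to the situation.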

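Other third-party developer use cases:

Conversational AI and voice agents

Developers of AI receptionists, customer support bots, and sales automation systems use Speechify's low-latency speech-to-speech models to produce natural spoken responses. With latency under 250 ms and voice cloning, these applications can maintain high-quality audio and a smooth conversational flow even across millions of concurrent calls.

Content platforms and audiobook generation

Publishers, authors, and education platforms embed Speechify models to turn written content into high-quality narration. The models are stable on long-form text and remain clear at high playback speeds, which makes them well suited to audiobooks, podcasts, and educational material.

Accessibility and assistive technology

Developers building tools for users with visual impairments or reading disabilities use Speechify's document understanding capabilities, including PDF parsing, OCR, and web extraction, so that the audio output preserves structure and comprehension even for complex documents.

Healthcare and therapeutic applications

Medical platforms and therapy apps use Speechify's emotion control and prosody features to provide empathetic, appropriate voice interaction for patient communication, mental health support, and wellness use cases.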

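How does SIMBA 3.0 perform on independent voice model rankings?

Independent benchmarks matter in voice AI because they reveal performance differences that short demos hide. One of the most widely cited third-party comparisons is the Artificial Analysis Speech Arena leaderboard, which evaluates text-to-speech models using large-scale blind listening comparisons and Elo scoring.

Speechify's SIMBA voice models rank above major providers on the Artificial Analysis Speech Arena leaderboard, including Microsoft Azure Neural, Google TTS models, Amazon Polly, and NVIDIA Magpie.

Rather than relying on curated examples, Artificial Analysis repeatedly tests listener preferences head to head across many samples. The ranking supports the claim that SIMBA beats production-grade commercial voice systems on model quality in real listening comparisons, which makes it a leading choice for developers building voice-enabled applications.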
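
For readers unfamiliar with Elo scoring, the sketch below shows the textbook update applied to one blind pairwise comparison. The leaderboard's exact method is not described in this article, so the K-factor of 32, the 400-point scale, and the function names are assumptions of the standard Elo formulation, not a description of how Artificial Analysis computes its rankings.

```python
from typing import Tuple


def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0) -> Tuple[float, float]:
    """Return both ratings after one listener comparison."""
    expected_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - expected_a)
    # Elo is zero-sum: whatever A gains, B loses.
    return rating_a + delta, rating_b - delta


if __name__ == "__main__":
    print(update(1500.0, 1500.0, a_won=True))
```

Because each update depends on the expected result, a win over a much higher-rated model moves the ratings more than a win over a lower-rated one.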

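Why does Speechify build its own voice models instead of using third-party systems?

Controlling the model means controlling:

- Quality

- Latency

- Cost

- Roadmap

- Optimization priorities

For example, when a company such as Retell or Vapi.ai relies entirely on third-party voice services, it inherits those providers' pricing structure, infrastructure limits, and research direction.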

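By owning the full stack, Speechify can:

- Tune prosody for specific use cases, such as conversational AI versus long-form narration

- Optimize latency to under 250 ms for real-time applications

- Integrate ASR and TTS into a seamless speech-to-speech pipeline

- Reduce cost to $10 per 1 million characters (compared with roughly $200 for ElevenLabs)

- Improve models continuously based on production feedback

- Align model development with developer needs in each industry

This full-stack control lets Speechify deliver higher quality, lower latency, and better cost efficiency than voice stacks that depend on third parties, which is decisive for developers scaling voice applications. The same advantages pass directly to developers who integrate the Speechify API into their own products.

Speechify's infrastructure was designed voice-first from the start rather than bolted onto a chat-first system. Developers who integrate Speechify models get access to a voice-native architecture optimized for production deployment.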

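How does Speechify support on-device voice AI and local inference?

Many voice AI systems run only through remote APIs, which brings network dependency, a risk of high latency, and privacy constraints. Speechify also offers on-device and local inference options for select use cases, so developers can build voice experiences that run closer to the user when needed.

Because Speechify builds its own voice models, it can optimize model size, serving architecture, and inference paths for on-device execution, not only for the cloud.

On-device and local inference enable:

- Consistently low, stable latency even when network conditions vary

- Privacy controls for sensitive documents and dictation

- Workflows that keep working offline or on unreliable networks

- Flexible deployment for enterprise and embedded environments

This moves Speechify beyond an "API-only voice" toward voice infrastructure that developers can deploy in the cloud, locally, and on device while keeping the same SIMBA model standard.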

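How does Speechify compare with Deepgram in ASR and voice infrastructure?

Deepgram is an ASR infrastructure provider centered on transcription and speech analytics APIs. Its core product is speech-to-text output for recording and analysis systems.

Speechify integrates ASR into a comprehensive family of voice AI models, so speech recognition can produce several kinds of output, from raw transcripts to polished writing and conversational responses. Developers using the Speechify API get ASR models optimized for a range of production workloads, not just transcription accuracy.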

Speechify's ASR and voice-typing models are optimized for these production use cases.

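On the Speechify platform, ASR connects to the full voice pipeline. A user can speak, and the application can produce structured text output, a spoken response, or a dialogue turn, all within a single API ecosystem, which reduces integration complexity and speeds up development.

Deepgram provides a transcription layer. Speechify, by contrast, offers a full voice model suite that combines voice input, structured output, synthesis, AI reasoning, and audio generation, all accessible through developer APIs and SDKs.

For developers who need end-to-end voice capabilities in voice-driven applications, Speechify is the strongest option in terms of model quality, latency, and depth of integration.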

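How does Speechify compare with OpenAI, Gemini, and Anthropic in voice AI?

Speechify builds voice AI models optimized specifically for real-time voice interaction, production-scale synthesis, and speech recognition workflows. Its core models prioritize conversational and voice performance, a different design philosophy from general-purpose chat or text-first systems.

Speechify specializes in voice AI, and SIMBA 3.0 is optimized for voice quality, low latency, and long-form stability in production. It offers production-grade quality and real-time responsiveness that developers can integrate into applications right away.

General-purpose AI labs such as OpenAI and Google Gemini optimize for broad reasoning, multimodality, and general intelligence tasks. Anthropic focuses on reasoning safety and long-context language modeling. For each of them, voice is an extension of a chat system rather than a dedicated voice model platform.

For voice AI workloads, model quality, low latency, and long-form stability matter more than breadth of general reasoning. This is where Speechify's dedicated voice models outperform general-purpose systems. Developers building AI phone systems, voice agents, narration platforms, or accessibility tools need voice-native models, not a voice layer on top of a chat model.

ChatGPT and Gemini offer voice modes, but their primary interface is text, and voice was added as an input and output layer on top of chat. Those voice layers are not optimized to the same degree as Speechify for sustained listening quality, dictation accuracy, or real-time speech performance.

Speechify is voice-first at the model level. Developers get models built for continuous voice workflows, without switching interaction modes or compromising on voice quality. The Speechify API exposes these capabilities directly through REST endpoints and the Python and TypeScript SDKs.

Together, these factors establish Speechify as a leading voice model provider for real-time voice interaction and production voice applications.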

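For voice AI workloads, SIMBA 3.0 is optimized for:

- Prosody (rhythm and intonation) for long-form narration and content delivery

- Low speech-to-speech latency for conversational AI

- Transcription accuracy for voice typing

- Document-aware voice interaction for structured content

These capabilities make Speechify a voice-first AI provider well suited to developer integration and production use.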

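What are the technical pillars of the Speechify AI Research Lab?

The Speechify AI Research Lab is organized around the core technologies that support production voice AI infrastructure for developers. To enable comprehensive voice AI deployment, it builds the following major model components:

- TTS models (speech generation): available through the API

- STT and ASR models (speech recognition): built into the voice platform

- Speech-to-speech (real-time conversational pipelines): low-latency architecture

- Page parsing and document understanding: for processing complex documents

- OCR (image to text): for scanned documents and image-based documents

- LLM-powered reasoning and conversation layer: for intelligent responses

- Low-latency inference infrastructure: responses in under 250 ms

- Developer APIs and cost-optimized serving: production-ready SDKs

Each layer is optimized for production voice workloads, and Speechify's vertically integrated model stack maintains high quality and low latency across the whole pipeline. Developers who integrate it get a single coherent stack instead of stitching together separate services.

Every layer matters. If any one of them is weak, the overall voice experience suffers. Speechify's approach gives developers complete voice infrastructure, not just a model.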

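What role do STT and ASR play in the Speechify AI Research Lab?

Speech-to-text (STT) and automatic speech recognition (ASR) are core model families within the Speechify research portfolio and serve a range of developer use cases.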

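Unlike raw transcription tools, Speechify's voice typing models, available through the API, are optimized for clean written output:

- Insert punctuation automatically

- Structure paragraphs intelligently

- Remove filler words

- Improve clarity for downstream use

- Support writing across apps and platforms

Traditional enterprise transcription focuses on capturing a record, whereas Speechify's ASR models are tuned for finished writing output and downstream usability. Dictated speech can become a usable draft right away, which reduces the cleanup burden of raw transcripts. This matters most to developers building productivity tools, voice assistants, and AI agents.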
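
To make the difference between a raw transcript and clean written output concrete, here is a toy, rule-based cleanup pass. It is only an illustration of the kind of transformation described above: Speechify's models are not described as working this way, and the filler list, the regular expressions, and the function name `clean_transcript` are all assumptions.

```python
import re

# A deliberately small filler list; a production system would need far more care.
FILLERS = re.compile(r"\b(?:um+|uh+|er+|you know)\b,?\s*", re.IGNORECASE)


def clean_transcript(raw: str) -> str:
    """Remove fillers, normalize spacing, add a final period, capitalize sentences."""
    text = FILLERS.sub("", raw)
    text = re.sub(r"\s+", " ", text).strip()
    if not text:
        return ""
    if text[-1] not in ".!?":
        text += "."
    # Capitalize the first lowercase letter at the start and after sentence punctuation.
    return re.sub(
        r"(^|[.!?]\s+)([a-z])",
        lambda m: m.group(1) + m.group(2).upper(),
        text,
    )


if __name__ == "__main__":
    print(clean_transcript("um so the meeting is uh moved to friday. you know, bring the report"))
```

Rules like these break down quickly on real speech, for example on proper nouns or on words that are fillers only in some contexts, which is the motivation for handling cleanup inside the model instead.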

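What does "high quality" mean for TTS?

Most people judge TTS quality by whether it sounds human. Developers judge it by how reliably it behaves in production across diverse content and real-world conditions.

High-quality production TTS requires:

- Clarity at the high playback speeds used for productivity and accessibility

- Low distortion at faster playback rates

- Stable pronunciation of technical and domain-specific terms

- Comfortable listening over long sessions

- Precise control of pauses, emphasis, and similar features through SSML

- Robustness across languages and accents

- A consistent voice identity over hours of audio

- Streaming support for real-time applications

Speechify's TTS models are trained for sustained performance over long sessions in production. The models available through the API deliver long-form reliability and clarity at high playback speeds in real developer deployments.

Developers can follow the Speechify quick-start guide to run their own content through the production-grade models and check voice quality directly.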

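Why are page parsing and OCR core research areas for Speechify's voice AI models?

Many AI teams compare OCR engines and multimodal models on raw recognition accuracy, GPU efficiency, or structured JSON output. Speechify leads in voice-first document understanding: extracting clean, correctly ordered content that preserves structure, so the spoken output keeps that structure and remains easy to follow.

Page parsing turns PDFs, web pages, Google Docs, and slide decks into a clean, logically ordered listening stream. Instead of passing navigation menus, repeated headers, or broken formatting to the speech synthesis pipeline, Speechify isolates the meaningful content so the spoken output stays coherent.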
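
One simple heuristic for the "repeated headers and menus" problem is to drop lines that recur on most pages. The sketch below is a toy illustration of that idea and not Speechify's page-parsing method; the function name `strip_repeated_lines` and the 60% threshold are assumptions.

```python
import math
from collections import Counter
from typing import List


def strip_repeated_lines(pages: List[List[str]], min_fraction: float = 0.6) -> List[str]:
    """Drop lines that recur on most pages (headers, footers, menus); keep the rest in order."""
    counts: Counter = Counter()
    for page in pages:
        # Count each distinct line once per page.
        counts.update({line.strip() for line in page if line.strip()})

    # A line must appear on at least two pages to be treated as boilerplate.
    threshold = max(2, math.ceil(len(pages) * min_fraction))
    boilerplate = {line for line, seen in counts.items() if seen >= threshold}

    kept: List[str] = []
    for page in pages:
        for line in page:
            stripped = line.strip()
            if stripped and stripped not in boilerplate:
                kept.append(stripped)
    return kept


if __name__ == "__main__":
    pages = [
        ["Acme Report", "Home | About", "Revenue grew."],
        ["Acme Report", "Home | About", "Costs fell."],
        ["Acme Report", "Outlook is stable."],
    ]
    print(strip_repeated_lines(pages))
```

A frequency heuristic like this can wrongly remove a legitimately repeated sentence, so real pipelines usually combine it with layout and position signals.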

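OCR makes scanned documents, screenshots, and image-based PDFs readable and searchable before speech synthesis begins. Without this layer, many documents remain unusable by voice systems.

In this sense, page parsing and OCR are foundational research areas within the Speechify AI Research Lab. They let developers build voice applications that understand a document before speaking it, which is the basis for narration tools, accessibility platforms, document processing systems, and any application that needs to voice complex content.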

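Which benchmarks matter for production TTS models?

Benchmarks commonly used to evaluate voice AI include:

- MOS (mean opinion score) for perceived naturalness

- Intelligibility scores (how clearly the words can be understood)

- Pronunciation accuracy for technical and domain-specific terms

- Long-form stability (no drift in audio quality or tone)

- Latency (time to first audio and streaming behavior)

- Robustness across languages and accents

- Cost efficiency at production scale

Speechify evaluates its models against benchmarks that reflect real production conditions:

- Does the voice stay clear at 2x to 4x speed?

- Does it stay comfortable to listen to when reading dense technical text?

- Does it handle abbreviations, citations, and structured documents accurately?

- Does paragraph structure remain clear in the audio output?

- Can it stream audio in real time with low latency?

- Is it cost-effective for applications that generate millions of characters per day?

The target benchmark is sustained performance and real-time interaction capability, not the quality of a short voice-over clip. Across these production benchmarks, SIMBA 3.0 delivers strong performance at real-world scale.

Independent benchmarking supports this. On the Artificial Analysis Text-to-Speech Arena leaderboard, Speechify SIMBA ranks above models from Microsoft Azure, Google, Amazon Polly, and NVIDIA, as well as other leading open voice models. These head-to-head listener comparisons measure actual perceived voice quality rather than curated demo output.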

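What is speech-to-speech, and why is it a core capability for production voice AI?

Speech-to-speech means a user speaks, the system understands, and the system replies in speech right away. It is the core of real-time conversational voice AI such as AI receptionists, customer support, voice assistants, and phone automation.

Speech-to-speech requires:

- Fast ASR (speech recognition)

- A reasoning system that maintains conversation state

- TTS that can stream quickly

- Turn-taking logic (deciding when to start and stop talking)

- Interruptibility (barge-in handling)

- Latency that feels human (under 250 ms)

Speech-to-speech is a major research area in the Speechify AI Research Lab because no single model solves it. It requires a tightly coordinated pipeline that combines speech recognition, reasoning, response generation, text-to-speech, streaming infrastructure, and real-time turn-taking.

Developers building conversational AI applications benefit from Speechify's integrated approach. Instead of stitching together separate ASR, reasoning, and TTS services, they can use a unified voice foundation designed for real-time interaction.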
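
The shape of one conversational turn, including barge-in, can be sketched with plain callables standing in for each stage. Nothing here is the Speechify API: `run_turn` and its parameters are hypothetical names, and the stub stages in the usage example only simulate ASR, reasoning, and TTS.

```python
from typing import Callable, Iterable, Iterator, List


def run_turn(
    user_audio: bytes,
    transcribe: Callable[[bytes], str],
    respond: Callable[[str, List[str]], str],
    synthesize: Callable[[str], Iterable[bytes]],
    user_is_speaking: Callable[[], bool],
    history: List[str],
) -> Iterator[bytes]:
    """One turn: ASR, then reasoning with conversation state, then streamed TTS."""
    text = transcribe(user_audio)
    history.append(f"user: {text}")

    reply = respond(text, history)
    history.append(f"agent: {reply}")

    for chunk in synthesize(reply):
        if user_is_speaking():
            break  # barge-in: stop talking as soon as the user interrupts
        yield chunk


if __name__ == "__main__":
    history: List[str] = []
    audio = run_turn(
        b"book a table please",
        transcribe=lambda a: a.decode("utf-8"),
        respond=lambda text, _history: text.upper(),
        synthesize=lambda reply: (word.encode("utf-8") for word in reply.split()),
        user_is_speaking=lambda: False,
        history=history,
    )
    print(list(audio), history)
```

Writing the turn as a generator means the caller can start playing the first chunk while later chunks are still being synthesized, and the interruption check runs between chunks.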

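Why does latency under 250 ms matter in developer applications?

In voice systems, latency determines whether an interaction feels natural. Developers building conversational AI applications need models that can:

- Begin responding quickly

- Stream speech smoothly

- Handle interruptions

- Maintain conversational timing

Speechify achieves latency under 250 ms and continues to optimize it. Its model serving and inference stack is also designed for fast response in continuous, real-time voice interaction.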
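
A team integrating any voice API may want to check its own measurements against this target. The sketch below computes a nearest-rank percentile over time-to-first-audio samples and compares it with the 250 ms figure quoted in this article. How Speechify itself measures latency is not specified here, so the choice of time to first audio, the 95th percentile, and the sample values are assumptions.

```python
import math
from typing import Sequence

BUDGET_MS = 250.0  # target quoted in this article


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile, with pct in the range (0, 100]."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100.0 * len(ordered)))
    return ordered[rank - 1]


def within_budget(first_audio_ms: Sequence[float], pct: float = 95.0) -> bool:
    """True when the chosen percentile of time-to-first-audio is under the budget."""
    return percentile(first_audio_ms, pct) < BUDGET_MS


if __name__ == "__main__":
    # Hypothetical measurements in milliseconds, with one slow outlier.
    samples = [180, 190, 200, 210, 220, 230, 240, 245, 248, 400]
    print(percentile(samples, 50), percentile(samples, 95), within_budget(samples))
```

Checking a high percentile rather than the average matters in conversation, because a single slow reply is what users notice.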

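Low latency matters for developer use cases such as:

- Natural speech-to-speech interaction in AI phone systems

- Real-time comprehension in voice assistants

- Interruptible dialogue in customer support bots

- Seamless conversational flow in AI agents

This is a defining trait of an advanced voice AI model provider and a main reason developers choose Speechify for production deployments.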

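What does "voice AI model provider" mean?

A voice AI model provider is not just a voice generator. It is both a research organization and an infrastructure platform that delivers:

- Production-ready voice models accessible through APIs

- Speech synthesis (text-to-speech) for content generation

- Speech recognition (speech-to-text) for voice input

- Speech-to-speech pipelines for conversational AI

- Document intelligence for processing complex content

- Developer APIs and SDKs for integration

- Streaming for real-time applications

- Voice cloning for custom voice creation

- Cost-efficient pricing for production-scale deployment

Speechify has evolved from supplying voice technology for its own products into a full voice model provider that developers can integrate into any application. That evolution is why Speechify is more than a consumer app with an API and has become a primary option for voice workloads.

Developers can access Speechify voice models through the Speechify Voice API, which comes with documentation, Python and TypeScript SDKs, and production-scale infrastructure, so adoption is straightforward.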

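How does the Speechify Voice API strengthen developer adoption?

Leadership as an AI research lab is demonstrated only when developers can use the technology directly through production APIs. The Speechify Voice API provides:

- Access to SIMBA voice models through REST endpoints

- Official Python and TypeScript SDKs for fast integration

- A clear integration path for startups and enterprises to add voice features without training models

- Full documentation and quick-start guides

- Streaming support for real-time applications

- Voice cloning for custom voices

- Support for more than 60 languages for global applications

- SSML and emotion control for nuanced output

Cost efficiency is also a draw. The pay-as-you-go plan costs $10 per 1 million characters, and enterprise pricing is available for larger commitments, so high-volume use cases remain economical.

By comparison, ElevenLabs is priced at roughly $200 per 1 million characters. For a product that generates millions or billions of characters of audio, price determines whether a feature is feasible at all.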
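
The arithmetic behind that comparison is simple enough to sketch. The two per-million prices are the figures quoted in this article (the ElevenLabs figure is described as approximate); the function name and the 50-million-character monthly volume are illustrative assumptions.

```python
from decimal import Decimal

SPEECHIFY_USD_PER_MILLION = Decimal("10")    # pay-as-you-go figure quoted in this article
ELEVENLABS_USD_PER_MILLION = Decimal("200")  # approximate figure quoted in this article


def synthesis_cost(characters: int, usd_per_million: Decimal) -> Decimal:
    """Cost in USD of synthesizing the given number of characters."""
    return Decimal(characters) / Decimal(1_000_000) * usd_per_million


if __name__ == "__main__":
    monthly_characters = 50_000_000  # hypothetical workload
    low = synthesis_cost(monthly_characters, SPEECHIFY_USD_PER_MILLION)
    high = synthesis_cost(monthly_characters, ELEVENLABS_USD_PER_MILLION)
    print(low, high, high / low)
```

At these quoted prices the ratio is 20 to 1 regardless of volume, so the absolute gap grows linearly with the number of characters generated.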

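Lower inference costs lead to a virtuous cycle: more developers ship voice features, more products adopt Speechify models, and usage data feeds back into model improvement. Cost efficiency drives scale, scale drives quality, and quality drives ecosystem growth.

The combination of research, infrastructure, and economics is what creates leadership in the voice AI model market.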

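How does the product feedback loop improve Speechify's models?

This is one of the most important aspects of being an AI research lab, because it separates a production model provider from a demo company.

Speechify continuously improves model quality through a feedback loop from deployments that reach millions of users. The signals include:

- Which voices developers' end users prefer

- Where users rewind and replay (a signal of comprehension difficulty)

- Which sentences users listen to repeatedly

- Which pronunciations users correct

- Which accents users prefer

- Where users increase speed (and where quality breaks down)

- Dictation correction patterns (where ASR fails)

- Which content types cause parsing errors

- Real-world latency requirements by use case

- Production deployment and integration challenges

A model trained without production feedback misses important real-world signals. Speechify's models receive continuous usage data from millions of voice interactions each day, which speeds up iteration and improvement.

This production feedback loop is also a competitive advantage for developers: integrating Speechify models gives immediate access to technology that keeps being refined in real-world conditions, not only validated in a lab.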

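How does Speechify compare with ElevenLabs, Cartesia, and Fish Audio?

Speechify is the strongest voice AI model provider for production developers, combining top-tier voice quality, industry-leading cost efficiency, and low-latency real-time interaction in a single unified model stack.

ElevenLabs is optimized primarily for creator and character voice generation, whereas Speechify's SIMBA 3.0 models are optimized for production developer workloads, including AI agents, voice automation, narration platforms, and accessibility systems.

Cartesia and other ultra-low-latency specialists focus narrowly on streaming performance, while Speechify combines low latency with voice quality, document intelligence, and developer API integration.

Compared with creator-focused voice platforms such as Fish Audio, Speechify provides production-grade voice AI infrastructure built for developers who need deployable, scalable systems.

SIMBA 3.0 models are optimized to compete on every dimension that matters at production scale:

- Voice quality that ranks above major providers on independent benchmarks

- Cost efficiency at $10 per 1 million characters (about 1/20 of ElevenLabs' price)

- Latency under 250 ms for real-time applications

- Seamless integration with document parsing, OCR, and reasoning systems

- Production infrastructure that scales to millions of requests

Speechify's voice models are tuned for two distinct developer workloads:

1. Conversational voice AI: low-latency interaction with fast turn-taking, streaming speech, and interruptibility, for AI agents, customer support bots, and phone automation

2. Long-form narration and content: models optimized for hours of listening, clarity at 2x to 4x playback, consistent pronunciation, and comfortable prosody over long sessions

Speechify also pairs these models with document intelligence, page parsing, OCR, and a developer API designed for production deployment. The result is voice AI infrastructure built for production scale, not just for demos.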

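Why does SIMBA 3.0 define Speechify's role in voice AI in 2026?

SIMBA 3.0 is more than a model upgrade. It reflects Speechify's evolution into a vertically integrated voice AI research and infrastructure organization that lets developers build voice applications at production scale.

By offering proprietary TTS, ASR, speech-to-speech, document intelligence, and low-latency infrastructure as one platform through its API, Speechify controls the quality, cost, and direction of its voice models and makes them available for any developer to integrate.

In 2026, voice is no longer a feature layered onto chat models. It is becoming the primary interface for AI applications across industries. SIMBA 3.0 establishes Speechify as a leading voice model provider for the next generation of voice-enabled applications.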