Speechify is announcing the early rollout of SIMBA 3.0, its latest generation of production voice AI models, now available to select third-party developers through the Speechify Voice API, with full general availability planned for March 2026. Built by the Speechify AI Research Lab, SIMBA 3.0 delivers high-quality text-to-speech, speech-to-text, and speech-to-speech capabilities that developers can integrate directly into their own products and platforms.

“SIMBA 3.0 was built for real production voice workloads, with a focus on long form stability, low latency, and reliable performance at scale. Our goal is to give developers voice models that are easy to integrate and strong enough to support real world applications from day one,” said Raheel Kazi, Head of Engineering at Speechify.

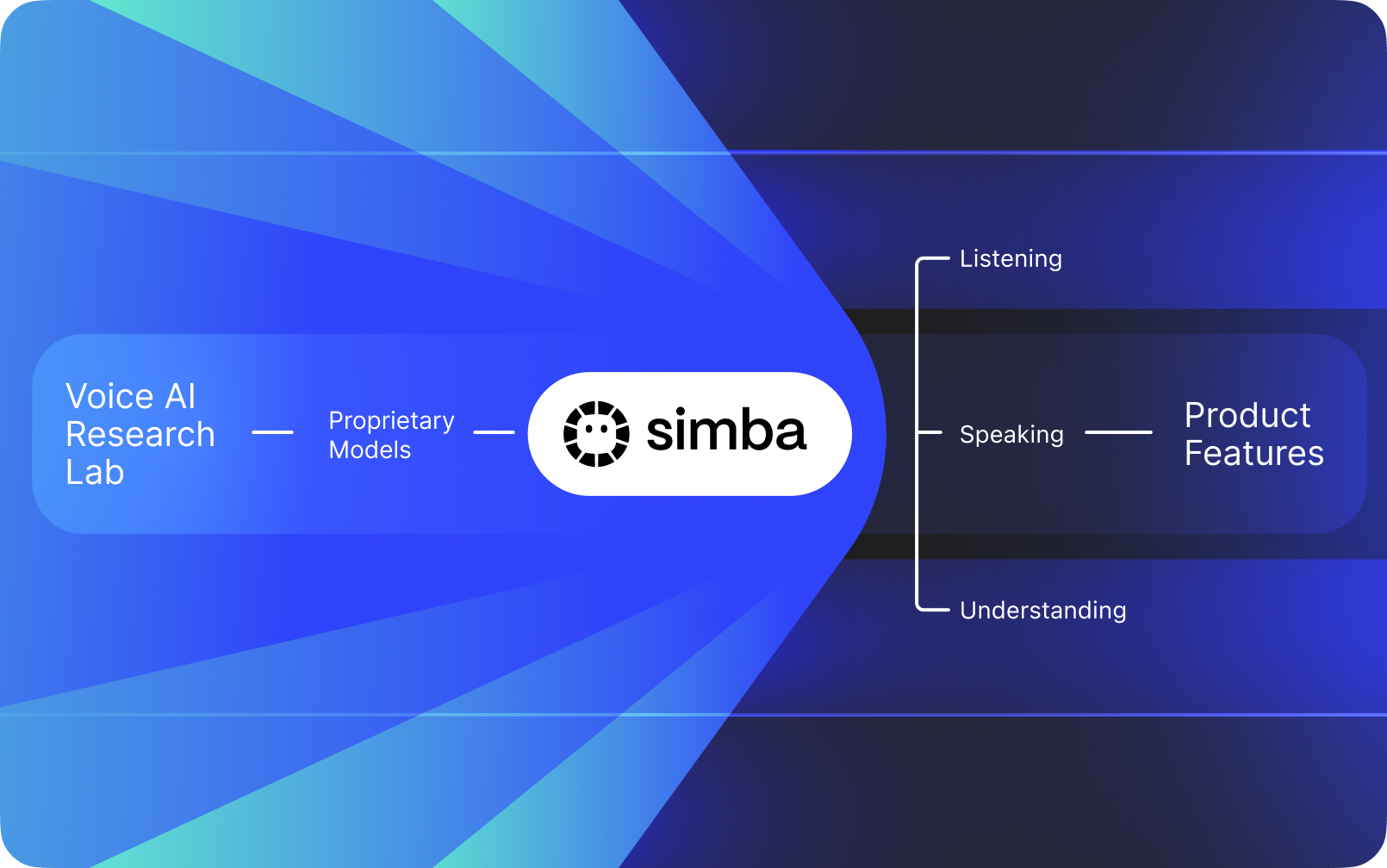

Speechify is not a voice interface layered on top of other companies' AI. It operates its own AI Research Lab dedicated to building proprietary voice models. These models are sold to third-party developers and companies through the Speechify API for integration into any application, from AI receptionists and customer support bots to content platforms and accessibility tools.

Speechify uses these same models to power its own consumer products and provides developers access to them through the Speechify Voice API. This matters because the quality, latency, cost, and long-term direction of Speechify's voice models are controlled by its own research team rather than by outside vendors.

Speechify's voice models are purpose-built for production voice workloads and deliver best-in-class model quality at scale. Third-party developers access SIMBA 3.0 and Speechify voice models directly through the Speechify Voice API, with production REST endpoints, full API documentation, developer quickstart guides, and officially supported Python and TypeScript SDKs. The Speechify developer platform is designed for fast integration, production deployment, and scalable voice infrastructure, enabling teams to move from first API call to live voice features quickly.

This article explains what SIMBA 3.0 is, what the Speechify AI Research Lab builds, and why Speechify delivers top-tier voice AI model quality, low latency, and strong cost efficiency for production developer workloads. It also makes the case that, on these workloads, Speechify outperforms other voice and multimodal AI providers such as OpenAI, Gemini, Anthropic, ElevenLabs, Cartesia, and Deepgram.

What Does It Mean to Call Speechify an AI Research Lab?

An artificial intelligence lab is a dedicated research and engineering organization where specialists in machine learning, data, and computational modeling work together to design, train, and deploy advanced intelligent systems. When people say "AI Research Lab," they usually mean an organization that does two things at the same time:

1. Develops and trains its own models

2. Makes those models available to developers through production APIs and SDKs

Some organizations are great at models but do not make them available to outside developers. Others provide APIs but rely mostly on third-party models. Speechify operates a vertically integrated voice AI stack. It builds its own voice AI models and makes them available to third-party developers through production APIs, while also using them inside its own consumer applications to validate model performance at scale.

The Speechify AI Research Lab is an in-house research organization focused on voice intelligence. Its mission is to advance text to speech, automatic speech recognition, and speech-to-speech systems so that developers can build voice-first applications across any use case, from AI receptionists and voice agents to narration engines and accessibility tools.

A real voice AI research lab typically has to solve:

- Text to speech quality and naturalness for production deployment

- Speech-to-text and ASR accuracy across accents and noise conditions

- Real-time latency for conversational turn-taking in AI agents

- Long-form stability for extended listening experiences

- Document understanding for processing PDFs, web pages, and structured content

- OCR and page parsing for scanned documents and images

- A product feedback loop that improves models over time

- Developer infrastructure that exposes voice capabilities through APIs and SDKs

Speechify's AI Research Lab builds these systems as a unified architecture and makes them accessible to developers through the Speechify Voice API, available for third-party integration across any platform or application.

What Is SIMBA 3.0?

SIMBA is Speechify's proprietary family of voice AI models. It powers Speechify's own products and is sold to third-party developers through the Speechify API. SIMBA 3.0 is the latest generation, optimized for voice-first performance, speed, and real-time interaction, and available for third-party developers to integrate into their own platforms.

SIMBA 3.0 is engineered to deliver high-end voice quality, low-latency response, and long-form listening stability at production scale, enabling developers to build professional voice applications across industries.

For third-party developers, SIMBA 3.0 enables use cases including:

- AI voice agents and conversational AI systems

- Customer support automation and AI receptionists

- Outbound calling systems for sales and service

- Voice assistants and speech-to-speech applications

- Content narration and audiobook generation platforms

- Accessibility tools and assistive technology

- Educational platforms with voice-driven learning

- Healthcare applications requiring empathetic voice interaction

- Multilingual translation and communication apps

- Voice-enabled IoT and automotive systems

When users say a voice "sounds human," they are describing multiple technical elements working together:

- Prosody (rhythm, pitch, stress)

- Meaning-aware pacing

- Natural pauses

- Stable pronunciation

- Intonation shifts aligned with syntax

- Emotional neutrality when appropriate

- Expressiveness when helpful

SIMBA 3.0 is the model layer that developers integrate to make voice experiences feel natural at high speed, across long sessions, and across many content types. For production voice workloads, from AI phone systems to content platforms, SIMBA 3.0 is optimized to outperform general-purpose voice layers.

How Does Speechify Use SSML for Precise Speech Control?

Speechify supports Speech Synthesis Markup Language (SSML) so developers can precisely control how synthesized speech sounds. SSML allows adjustment of pitch, speaking rate, pauses, emphasis, and style by wrapping content in <speak> tags and using supported tags such as prosody, break, emphasis, and substitution. This gives teams fine control over delivery and structure, helping voice output better match context, formatting, and intent across production applications.
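
As a rough illustration of the markup described above, the following Python sketch assembles an SSML string that uses the prosody, break, emphasis, and substitution tags inside a `<speak>` root. The tag and attribute names come from the W3C SSML specification; which attribute values the Speechify API accepts is an assumption here, and `build_ssml` is a hypothetical helper rather than part of any SDK.

```python
from xml.sax.saxutils import escape, quoteattr
import xml.etree.ElementTree as ET


def build_ssml(intro: str, key_phrase: str, abbreviation: str, spoken_form: str) -> str:
    """Wrap text in <speak> and apply prosody, break, emphasis, and sub tags.

    Hypothetical helper: attribute values follow the W3C SSML spec, and
    support for each value by a given TTS API is assumed, not confirmed.
    """
    return (
        "<speak>"
        # Slow, low-pitched delivery for the opening sentence.
        f'<prosody rate="slow" pitch="low">{escape(intro)}</prosody>'
        # A 300 ms pause before the key phrase.
        '<break time="300ms"/>'
        # Stress the key phrase.
        f'<emphasis level="strong">{escape(key_phrase)}</emphasis> '
        # Read the abbreviation aloud using its spoken form.
        f"<sub alias={quoteattr(spoken_form)}>{escape(abbreviation)}</sub>"
        "</speak>"
    )


if __name__ == "__main__":
    ssml = build_ssml("Welcome back.", "Terms & conditions apply", "TTS", "text to speech")
    ET.fromstring(ssml)  # raises ParseError if the markup is not well-formed
    print(ssml)
```

Escaping the text before interpolation keeps characters such as `&` from breaking the markup, and parsing the result locally catches malformed SSML before a request is sent.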

How Does Speechify Enable Real-Time Audio Streaming?

Speechify provides a streaming text to speech endpoint that delivers audio in chunks as it is generated, allowing playback to begin immediately instead of waiting for full audio completion. This supports long form and low latency use cases such as voice agents, assistive technology, automated podcast generation, and audiobook production. Developers can stream large inputs beyond standard limits and receive raw audio chunks in formats such as MP3, OGG, AAC, and PCM for fast integration into real time systems.
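
The chunked delivery described above can be sketched on the client side as a loop that forwards each chunk as soon as it arrives. This Python example is a minimal sketch under stated assumptions: `fake_audio_stream` is a stand-in for a streaming HTTP response body, and neither function is part of the Speechify SDK.

```python
import io
import time
from typing import BinaryIO, Iterable, Iterator, Optional, Tuple


def fake_audio_stream(total_bytes: int, chunk_size: int) -> Iterator[bytes]:
    """Stand-in for a streaming response body (hypothetical); yields zero-filled chunks."""
    sent = 0
    while sent < total_bytes:
        size = min(chunk_size, total_bytes - sent)
        yield bytes(size)
        sent += size


def consume_stream(chunks: Iterable[bytes], sink: BinaryIO) -> Tuple[int, Optional[float]]:
    """Write chunks to the sink as they arrive.

    Returns (bytes written, seconds until the first non-empty chunk),
    where the second value is None if no audio arrived at all.
    """
    start = time.monotonic()
    first_chunk_after: Optional[float] = None
    written = 0
    for chunk in chunks:
        if not chunk:
            continue  # skip keep-alive or empty chunks
        if first_chunk_after is None:
            first_chunk_after = time.monotonic() - start
        sink.write(chunk)  # a real player would start playback here
        written += len(chunk)
    return written, first_chunk_after


if __name__ == "__main__":
    buffer = io.BytesIO()
    total, first = consume_stream(fake_audio_stream(10_000, 4_096), buffer)
    print(f"received {total} bytes, first chunk after {first:.6f}s")
```

Tracking the time to the first chunk separately from the total transfer time reflects why streaming matters: playback can begin at the first chunk rather than at the end of synthesis.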

How Do Speech Marks Synchronize Text and Audio in Speechify?

Speech marks map spoken audio to the original text with word level timing data. Each synthesis response includes time aligned text chunks that show when specific words begin and end in the audio stream. This enables real time text highlighting, precise seek by word or phrase, usage analytics, and tight synchronization between on screen text and playback. Developers can use this structure to build accessible readers, learning tools, and interactive listening experiences.
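
To show how word-level timing data supports highlighting and seeking, here is a minimal Python sketch. The `WordMark` structure, its field names, and the sample timings are assumptions made for illustration; the actual response schema is defined in the API documentation.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class WordMark:
    """One time-aligned word (hypothetical shape; field names are assumptions)."""
    start_ms: int
    end_ms: int
    text: str


def word_at(marks: List[WordMark], position_ms: int) -> Optional[WordMark]:
    """Return the word being spoken at position_ms, or None during a pause.

    Assumes marks are sorted by start_ms and do not overlap.
    """
    starts = [m.start_ms for m in marks]
    i = bisect_right(starts, position_ms) - 1  # last word starting at or before position
    if i >= 0 and position_ms < marks[i].end_ms:
        return marks[i]
    return None


def seek_to_word(marks: List[WordMark], query: str) -> Optional[int]:
    """Return the start time of the first occurrence of a word, for seek-by-word."""
    for mark in marks:
        if mark.text.lower() == query.lower():
            return mark.start_ms
    return None


# Illustrative timings only, not real API output.
SAMPLE_MARKS = [
    WordMark(0, 320, "Speech"),
    WordMark(320, 610, "marks"),
    WordMark(700, 1100, "align"),
    WordMark(1100, 1400, "text"),
]
```

A player would call `word_at` on each playback tick to decide which word to highlight, and `seek_to_word` when a user taps a word to jump to it.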

How Does Speechify Support Emotional Expression in Synthesized Speech?

Speechify includes Emotion Control through a dedicated SSML style tag that lets developers assign emotional tone to spoken output. Supported emotions include options such as cheerful, calm, assertive, energetic, sad, and angry. By combining emotion tags with punctuation and other SSML controls, developers can produce speech that better matches intent and context. This is especially useful for voice agents, wellness applications, customer support flows, and guided content where tone affects user experience.
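
A sketch of how an application might wrap text in an emotion tag follows. The tag name `speechify:style` and its `emotion` attribute are assumptions made for illustration and should be checked against the API documentation; the emotion list is taken from the paragraph above and may not be the full supported set.

```python
from xml.sax.saxutils import escape

# Emotions named in the text above; the full supported set may be larger.
EMOTIONS_FROM_TEXT = {"cheerful", "calm", "assertive", "energetic", "sad", "angry"}


def with_emotion(text: str, emotion: str, tag: str = "speechify:style") -> str:
    """Wrap text in a style tag carrying an emotion.

    The default tag name and the 'emotion' attribute are assumptions,
    not confirmed API details.
    """
    if emotion not in EMOTIONS_FROM_TEXT:
        raise ValueError(f"unknown emotion: {emotion!r}")
    return f'<speak><{tag} emotion="{emotion}">{escape(text)}</{tag}></speak>'


if __name__ == "__main__":
    print(with_emotion("Take a slow breath & relax.", "calm"))
```

Validating the emotion name locally gives the application a clear error before any request is made, which is useful when the tone is chosen dynamically from user context.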

Real-World Developer Use Cases for Speechify Voice Models

Speechify's voice models power production applications across diverse industries. Here are real examples of how third-party developers are using the Speechify API:

MoodMesh: Emotionally Intelligent Wellness Applications

MoodMesh, a wellness technology company, integrated the Speechify Text-to-Speech API to deliver emotionally nuanced speech for guided meditations and compassionate conversations. By leveraging Speechify's SSML support and emotion control features, MoodMesh adjusts tone, cadence, volume, and speech speed to match users' emotional contexts, creating human-like interactions that standard TTS couldn't deliver. This demonstrates how developers use Speechify models to build sophisticated applications requiring emotional intelligence and contextual awareness.

AnyLingo: Multilingual Communication and Translation

AnyLingo, a real-time translation messenger app, uses Speechify's voice cloning API to enable users to send voice messages in a cloned version of their own voice, translated into the recipient's language with proper inflection, tone, and context. The integration allows business professionals to communicate across languages efficiently, while maintaining the personal touch of their own voice. AnyLingo's founder notes that Speechify's emotion control features ("Moods") are key differentiators, enabling messages that match the appropriate emotional tone for any situation.

Additional Third-Party Developer Use Cases:

Conversational AI and Voice Agents

Developers building AI receptionists, customer support bots, and sales call automation systems use Speechify's low-latency speech-to-speech models to create natural-sounding voice interactions. With sub-250ms latency and voice cloning capabilities, these applications can scale to millions of simultaneous phone calls while maintaining voice quality and conversational flow.

Content Platforms and Audiobook Generation

Publishers, authors, and educational platforms integrate Speechify models to convert written content into high-quality narration. The models' optimization for long-form stability and high-speed playback clarity makes them ideal for generating audiobooks, podcast content, and educational materials at scale.

Accessibility and Assistive Technology

Developers building tools for vision-impaired users or individuals with reading disabilities rely on Speechify's document understanding capabilities, including PDF parsing, OCR, and web page extraction, to ensure voice output preserves structure and comprehension across complex documents.

Healthcare and Therapeutic Applications

Medical platforms and therapeutic applications use Speechify's emotion control and prosody features to deliver empathetic, contextually appropriate voice interactions, which are critical for patient communication, mental health support, and wellness applications.

How Does SIMBA 3.0 Perform on Independent Voice Model Leaderboards?

Independent benchmarking matters in voice AI because short demos can hide performance gaps. One of the most widely referenced third-party benchmarks is the Artificial Analysis Speech Arena leaderboard, which evaluates text to speech models using large-scale blind listening comparisons and Elo scoring.

Speechify's SIMBA voice models rank above multiple major providers on the Artificial Analysis Speech Arena leaderboard, including Microsoft Azure Neural, Google TTS models, Amazon Polly variants, NVIDIA Magpie, and several open-weight voice systems.

Rather than relying on curated examples, Artificial Analysis uses repeated head-to-head listener preference testing across many samples. This ranking reinforces that SIMBA outperforms widely deployed commercial voice systems, winning on model quality in real listening comparisons and establishing it as the best production-ready choice for developers building voice-enabled applications.
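
For readers unfamiliar with how head-to-head preferences become a ranking, the standard Elo update can be sketched in a few lines of Python. The K-factor of 32 and the 400-point scale are conventional defaults and are assumptions here; the leaderboard's actual rating procedure and parameters may differ.

```python
from typing import Tuple


def expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Probability that A is preferred over B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


def update_elo(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0) -> Tuple[float, float]:
    """Return updated (rating_a, rating_b) after one blind comparison.

    k=32 is a conventional default, not a published leaderboard parameter.
    """
    exp_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - exp_a)
    # Elo is zero-sum: whatever A gains, B loses.
    return rating_a + delta, rating_b - delta


if __name__ == "__main__":
    print(update_elo(1000.0, 1000.0, a_won=True))
```

Because each update depends on the gap between the expected and actual outcome, a win over a higher-rated model moves the rating more than a win over a lower-rated one, which is why many comparisons are needed before rankings stabilize.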

Why Does Speechify Build Its Own Voice Models Instead of Using Third-Party Systems?

Control over the model means control over:

- Quality

- Latency

- Cost

- Roadmap

- Optimization priorities

When companies like Retell or Vapi.ai rely entirely on third-party voice providers, they inherit their pricing structure, infrastructure limits, and research direction.

By owning its full stack, Speechify can:

- Tune prosody for specific use cases (conversational AI vs. long-form narration)

- Optimize latency below 250ms for real-time applications

- Integrate ASR and TTS seamlessly in speech-to-speech pipelines

- Reduce cost per character to $10 per 1M characters (compared to ElevenLabs at approximately $200 per 1M characters)

- Ship model improvements continuously based on production feedback

- Align model development with developer needs across industries

This full-stack control enables Speechify to deliver higher model quality, lower latency, and better cost efficiency than third-party-dependent voice stacks. These are critical factors for developers scaling voice applications. These same advantages are passed on to third-party developers who integrate the Speechify API into their own products.

Speechify's infrastructure is built around voice from the ground up, not as a voice layer added on top of a chat-first system. Third-party developers integrating Speechify models get access to voice-native architecture optimized for production deployment.

How Does Speechify Support On-Device Voice AI and Local Inference?

Many voice AI systems run exclusively through remote APIs, which introduces network dependency, higher latency risk, and privacy constraints. Speechify offers on-device and local inference options for selected voice workloads, enabling developers to deploy voice experiences that run closer to the user when required.

Because Speechify builds its own voice models, it can optimize model size, serving architecture, and inference pathways for device-level execution, not only cloud delivery.

On-device and local inference supports:

- Lower and more consistent latency in variable network conditions

- Greater privacy control for sensitive documents and dictation

- Offline or degraded-network usability for core workflows

- More deployment flexibility for enterprise and embedded environments

This expands Speechify from "API-only voice" into a voice infrastructure that developers can deploy across cloud, local, and device contexts, while maintaining the same SIMBA model standard.

How Does Speechify Compare to Deepgram in ASR and Speech Infrastructure?

Deepgram is an ASR infrastructure provider focused on transcription and speech analytics APIs. Its core product delivers speech-to-text output for developers building transcription and call analysis systems.

Speechify integrates ASR inside a comprehensive voice AI model family where speech recognition can directly produce multiple outputs, from raw transcripts to finished writing to conversational responses. Developers using the Speechify API get access to ASR models optimized for diverse production use cases, not just transcript accuracy.

Speechify's ASR and dictation models are optimized for:

- Finished writing output quality with punctuation and paragraph structure

- Filler word removal and sentence formatting

- Draft-ready text for emails, documents, and notes

- Voice typing that produces clean output with minimal post-processing

- Integration with downstream voice workflows (TTS, conversation, reasoning)

In the Speechify platform, ASR connects to the full voice pipeline. Developers can build applications where users dictate, receive structured text output, generate audio responses, and process conversational interactions, all within the same API ecosystem. This reduces integration complexity and accelerates development.

Deepgram provides a transcription layer. Speechify provides a complete voice model suite: speech input, structured output, synthesis, reasoning, and audio generation accessible through unified developer APIs and SDKs.

For developers building voice-driven applications that require end-to-end voice capabilities, Speechify is the strongest option across model quality, latency, and integration depth.

How Does Speechify Compare to OpenAI, Gemini, and Anthropic in Voice AI?

Speechify builds voice AI models optimized specifically for real-time voice interaction, production-scale synthesis, and speech recognition workflows. Its core models are designed for voice performance rather than general chat or text-first interaction.

Speechify's specialization is voice AI model development, and SIMBA 3.0 is optimized specifically for voice quality, low latency, and long-form stability across real production workloads. SIMBA 3.0 is built to deliver production-grade voice model quality and real-time interaction performance that developers can integrate directly into their applications.

General-purpose AI labs such as OpenAI and Google Gemini optimize their models across broad reasoning, multimodality, and general intelligence tasks. Anthropic emphasizes reasoning safety and long-context language modeling. Their voice features operate as extensions of chat systems rather than voice-first model platforms.

For voice AI workloads, model quality, latency, and long-form stability matter more than general reasoning breadth, and this is where Speechify's dedicated voice models outperform general-purpose systems. Developers building AI phone systems, voice agents, narration platforms, or accessibility tools need voice-native models, not voice layers on top of chat models.

ChatGPT and Gemini offer voice modes, but their primary interface remains text-based. Voice functions as an input and output layer on top of chat. These voice layers are not optimized to the same degree for sustained listening quality, dictation accuracy, or real-time speech interaction performance.

Speechify is built voice-first at the model level. Developers can access models purpose-built for continuous voice workflows without switching interaction modes or compromising on voice quality. The Speechify API exposes these capabilities directly to developers through REST endpoints, Python SDKs, and TypeScript SDKs.

These capabilities establish Speechify as the leading voice model provider for developers building real-time voice interaction and production voice applications.

Within voice AI workloads, SIMBA 3.0 is optimized for:

- Prosody in long-form narration and content delivery

- Speech-to-speech latency for conversational AI agents

- Dictation-quality output for voice typing and transcription

- Document-aware voice interaction for processing structured content

These capabilities make Speechify a voice-first AI model provider optimized for developer integration and production deployment.

What Are the Core Technical Pillars of Speechify's AI Research Lab?

Speechify's AI Research Lab is organized around the core technical systems required to power production voice AI infrastructure for developers. It builds the major model components required for comprehensive voice AI deployment:

- TTS models (speech generation) - Available via API

- STT & ASR models (speech recognition) - Integrated in the voice platform

- Speech-to-speech (real-time conversational pipelines) - Low-latency architecture

- Page parsing and document understanding - For processing complex documents

- OCR (image to text) - For scanned documents and images

- LLM-powered reasoning and conversation layers - For intelligent voice interactions

- Infrastructure for low-latency inference - Sub-250ms response times

- Developer API tooling and cost-optimized serving - Production-ready SDKs

Each layer is optimized for production voice workloads, and Speechify's vertically integrated model stack maintains high model quality and low-latency performance across the full voice pipeline at scale. Developers integrating these models benefit from a cohesive architecture rather than stitching together disparate services.

Each of these layers matters. If any layer is weak, the overall voice experience feels weak. Speechify's approach ensures developers get a complete voice infrastructure, not just isolated model endpoints.

What Role Do STT and ASR Play in the Speechify AI Research Lab?

Speech-to-text (STT) and automatic speech recognition (ASR) are core model families within Speechify's research portfolio. They power developer use cases including:

- Voice typing and dictation APIs

- Real-time conversational AI and voice agents

- Meeting intelligence and transcription services

- Speech-to-speech pipelines for AI phone systems

- Multi-turn voice interaction for customer support bots

Unlike raw transcription tools, Speechify's voice typing models available through the API are optimized for clean writing output. They:

- Insert punctuation automatically

- Structure paragraphs intelligently

- Remove filler words

- Improve clarity for downstream use

- Support writing across applications and platforms

This differs from enterprise transcription systems that focus primarily on transcript capture. Speechify's ASR models are tuned for finished output quality and downstream usability, so speech input produces draft-ready content rather than cleanup-heavy transcripts. This is critical for developers building productivity tools, voice assistants, or AI agents that need to act on spoken input.
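
To make the difference between a raw transcript and draft-ready text concrete, the following Python sketch applies a few naive cleanup rules. It is a rule-based stand-in for illustration only and is not how Speechify's models work; the filler list and the `clean_transcript` helper are assumptions.

```python
import re

# Naive filler pattern (assumption): whole-word "um", "uh", "erm", and "you know",
# plus an optional trailing comma and whitespace. A rule like this will also
# remove legitimate uses such as "do you know", which a learned model can avoid.
_FILLER_RE = re.compile(r"\b(?:um+|uh+|erm+|you know)\b,?\s*", re.IGNORECASE)


def clean_transcript(raw: str) -> str:
    """Remove simple fillers, normalize spacing, and add sentence casing."""
    text = _FILLER_RE.sub("", raw)
    text = re.sub(r"\s+", " ", text).strip()
    if not text:
        return text
    text = text[0].upper() + text[1:]
    if text[-1] not in ".!?":
        text += "."
    return text


if __name__ == "__main__":
    print(clean_transcript("um so I think uh we should ship it you know on friday"))
```

Even this toy version shows why post-processing matters: downstream tools receive a sentence they can act on rather than a stream of disfluencies.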

What Makes TTS "High Quality" for Production Use Cases?

Most people judge TTS quality by whether it sounds human. Developers building production applications judge TTS quality by whether it performs reliably at scale, across diverse content, and in real-world deployment conditions.

High-quality production TTS requires:

- Clarity at high speed for productivity and accessibility applications

- Low distortion at faster playback rates

- Pronunciation stability for domain-specific terminology

- Listening comfort over long sessions for content platforms

- Control over pacing, pauses, and emphasis via SSML support

- Robust multilingual output across accents and languages

- Consistent voice identity across hours of audio

- Streaming capability for real-time applications

Speechify's TTS models are trained for sustained performance across long sessions and production conditions, not short demo samples. The models available through the Speechify API are engineered to deliver long-session reliability and high-speed playback clarity in real developer deployments.

Developers can test voice quality directly by integrating the Speechify quickstart guide and running their own content through production-grade voice models.

Why Are Page Parsing and OCR Core to Speechify's Voice AI Models?

Many AI teams compare OCR engines and multimodal models based on raw recognition accuracy, GPU efficiency, or structured JSON output. Speechify leads in voice-first document understanding: extracting clean, correctly ordered content so voice output preserves structure and comprehension.

Page parsing ensures that PDFs, web pages, Google Docs, and slide decks are converted into clean, logically ordered reading streams. Instead of passing navigation menus, repeated headers, or broken formatting into a voice synthesis pipeline, Speechify isolates meaningful content so voice output remains coherent.
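
One simple way to picture this kind of content isolation is a filter that drops lines recurring on most pages, since those are usually running headers, footers, or navigation. The Python sketch below is a generic heuristic offered under that assumption; it is not Speechify's parsing method, and the 60% threshold is an arbitrary illustrative choice.

```python
import math
from collections import Counter
from typing import List


def strip_repeated_lines(pages: List[List[str]], min_fraction: float = 0.6) -> List[str]:
    """Flatten pages into one reading stream, dropping lines that recur
    on at least min_fraction of pages (likely headers, footers, or menus).

    Heuristic sketch: the threshold is an assumption, and a line must appear
    on at least two pages before it is treated as boilerplate.
    """
    if not pages:
        return []
    counts: Counter = Counter()
    for page in pages:
        # Count each distinct line once per page.
        counts.update({line.strip() for line in page if line.strip()})
    threshold = max(2, math.ceil(min_fraction * len(pages)))
    repeated = {line for line, n in counts.items() if n >= threshold}

    stream: List[str] = []
    for page in pages:
        for line in page:
            stripped = line.strip()
            if stripped and stripped not in repeated:
                stream.append(stripped)
    return stream


if __name__ == "__main__":
    pages = [
        ["Home | About", "Acme Report", "Intro."],
        ["Home | About", "Acme Report", "Methods."],
        ["Home | About", "Acme Report", "Results."],
    ]
    print(strip_repeated_lines(pages))
```

Passing the filtered stream to synthesis instead of the raw pages keeps the listener from hearing the same header read aloud on every page.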

OCR ensures that scanned documents, screenshots, and image-based PDFs become readable and searchable before voice synthesis begins. Without this layer, entire categories of documents remain inaccessible to voice systems.

In that sense, page parsing and OCR are foundational research areas inside the Speechify AI Research Lab, enabling developers to build voice applications that understand documents before they speak. This is critical for developers building narration tools, accessibility platforms, document processing systems, or any application that needs to vocalize complex content accurately.

What Are TTS Benchmarks That Matter for Production Voice Models?

In voice AI model evaluation, benchmarks commonly include:

- MOS (mean opinion score) for perceived naturalness

- Intelligibility scores (how easily words are understood)

- Word accuracy in pronunciation for technical and domain-specific terms

- Stability across long passages (no drift in tone or quality)

- Latency (time to first audio, streaming behavior)

- Robustness across languages and accents

- Cost efficiency at production scale

Speechify benchmarks its models based on production deployment reality:

- How does the voice perform at 2x, 3x, 4x speed?

- Does it remain comfortable when reading dense technical text?

- Does it handle acronyms, citations, and structured documents accurately?

- Does it keep paragraph structure clear in audio output?

- Can it stream audio in real-time with minimal latency?

- Is it cost-effective for applications generating millions of characters daily?

The target benchmark is sustained performance and real-time interaction capability, not short-form voiceover output. Across these production benchmarks, SIMBA 3.0 is engineered to lead at real-world scale.

Independent benchmarking supports this performance profile. On the Artificial Analysis Speech Arena leaderboard, Speechify SIMBA ranks above widely used models from providers such as Microsoft Azure, Google, Amazon Polly, NVIDIA, and multiple open-weight voice systems. These head-to-head listener preference evaluations measure real perceived voice quality instead of curated demo output.

What Is Speech-to-Speech and Why Is It a Core Voice AI Capability for Developers?

Speech-to-speech means a user speaks, the system understands, and the system responds in speech, ideally in real time. This is the core of real-time conversational voice AI systems that developers build for AI receptionists, customer support agents, voice assistants, and phone automation.

Speech-to-speech systems require:

- Fast ASR (speech recognition)

- A reasoning system that can maintain conversation state

- TTS that can stream quickly

- Turn-taking logic (when to start talking, when to stop)

- Interruptibility (barge-in handling)

- Latency targets that feel human (sub-250ms)

Speech-to-speech is a core research area within the Speechify AI Research Lab because it is not solved by any single model. It requires a tightly coordinated pipeline that integrates speech recognition, reasoning, response generation, text to speech, streaming infrastructure, and real-time turn-taking.
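
The turn-taking and barge-in requirements above can be pictured as a small state machine. This Python sketch is a simplified model under stated assumptions: the state and event names are hypothetical, and a production pipeline would also handle audio buffering, timeouts, and cancellation of in-flight synthesis.

```python
from enum import Enum, auto
from typing import Dict, Iterable, List, Tuple


class State(Enum):
    LISTENING = auto()
    THINKING = auto()
    SPEAKING = auto()


class Event(Enum):
    USER_SPEECH_START = auto()
    USER_SPEECH_END = auto()
    RESPONSE_READY = auto()
    PLAYBACK_DONE = auto()


# Hypothetical transition table for a single conversational agent.
TRANSITIONS: Dict[Tuple[State, Event], State] = {
    (State.LISTENING, Event.USER_SPEECH_END): State.THINKING,
    (State.THINKING, Event.RESPONSE_READY): State.SPEAKING,
    # The user resumes talking before the reply is ready: go back to listening.
    (State.THINKING, Event.USER_SPEECH_START): State.LISTENING,
    # Barge-in: the user interrupts playback, so the agent stops and listens.
    (State.SPEAKING, Event.USER_SPEECH_START): State.LISTENING,
    (State.SPEAKING, Event.PLAYBACK_DONE): State.LISTENING,
}


def step(state: State, event: Event) -> State:
    """Apply one event; events with no defined transition leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)


def run(events: Iterable[Event], state: State = State.LISTENING) -> List[State]:
    """Return the sequence of states visited, starting with the initial state."""
    history = [state]
    for event in events:
        state = step(state, event)
        history.append(state)
    return history
```

Keeping the transitions in a table makes the interruption rules explicit and easy to test, which matters because barge-in behavior is one of the first things users notice in a voice agent.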

Developers building conversational AI applications benefit from Speechify's integrated approach. Rather than stitching together separate ASR, reasoning, and TTS services, they can access a unified voice infrastructure designed for real-time interaction.

Why Does Latency Under 250ms Matter for Developer Applications?

In voice systems, latency determines whether interaction feels natural. Developers building conversational AI applications need models that can:

- Begin responding quickly

- Stream speech smoothly

- Handle interruptions

- Maintain conversational timing

Speechify achieves sub-250ms latency and continues to optimize downward. Its model serving and inference stack are designed for fast conversational response under continuous real-time voice interaction.

Low latency supports critical developer use cases:

- Natural speech-to-speech interaction in AI phone systems

- Real-time comprehension for voice assistants

- Interruptible voice dialogue for customer support bots

- Seamless conversational flow in AI agents

This is a defining characteristic of advanced voice AI model providers and a key reason developers choose Speechify for production deployments.

What Does "Voice AI Model Provider" Mean?

A voice AI model provider is not just a voice generator. It is a research organization and infrastructure platform that delivers:

- Production-ready voice models accessible via APIs

- Speech synthesis (text to speech) for content generation

- Speech recognition (speech-to-text) for voice input

- Speech-to-speech pipelines for conversational AI

- Document intelligence for processing complex content

- Developer APIs and SDKs for integration

- Streaming capabilities for real-time applications

- Voice cloning for custom voice creation

- Cost-efficient pricing for production-scale deployment

Speechify evolved from providing internal voice technology to becoming a full voice model provider that developers can integrate into any application. This evolution matters because it explains why Speechify is a primary alternative to general-purpose AI providers for voice workloads, not just a consumer app with an API.

Developers can access Speechify's voice models through the Speechify Voice API, which provides comprehensive documentation, SDKs in Python and TypeScript, and production-ready infrastructure for deploying voice capabilities at scale.

How Does the Speechify Voice API Strengthen Developer Adoption?

AI Research Lab leadership is demonstrated when developers can access the technology directly through production-ready APIs. The Speechify Voice API delivers:

- Access to Speechify's SIMBA voice models via REST endpoints

- Python and TypeScript SDKs for rapid integration

- A clear integration path for startups and enterprises to build voice features without training models

- Comprehensive documentation and quickstart guides

- Streaming support for real-time applications

- Voice cloning capabilities for custom voice creation

- 60+ language support for global applications

- SSML and emotion control for nuanced voice output

Cost efficiency is central here. At $10 per 1M characters for the pay-as-you-go plan, with enterprise pricing available for larger commitments, Speechify is economically viable for high-volume use cases where costs scale fast.

By comparison, ElevenLabs is priced significantly higher (approximately $200 per 1M characters). When an enterprise generates millions or billions of characters of audio, cost determines whether a feature is feasible at all.
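
The effect of per-character pricing at volume is easy to check with a short calculation. The Python sketch below uses only the two rates quoted above; it ignores tiers, free quotas, and enterprise discounts, and the 5 million characters per day workload is a hypothetical example.

```python
from decimal import Decimal

# Rates quoted in the text above, in USD per 1M characters.
SPEECHIFY_USD_PER_MILLION = Decimal("10")
ELEVENLABS_USD_PER_MILLION = Decimal("200")  # approximate figure from the text


def monthly_cost(chars_per_day: int, usd_per_million: Decimal, days: int = 30) -> Decimal:
    """Estimated monthly spend for a flat per-character rate (no tiers or discounts)."""
    total_chars = Decimal(chars_per_day) * days
    return total_chars * usd_per_million / Decimal(1_000_000)


if __name__ == "__main__":
    workload = 5_000_000  # hypothetical: 5M characters generated per day
    print("Speechify:", monthly_cost(workload, SPEECHIFY_USD_PER_MILLION))
    print("ElevenLabs:", monthly_cost(workload, ELEVENLABS_USD_PER_MILLION))
```

At the quoted rates the ratio between the two totals is always 20 to 1, so the absolute gap grows linearly with volume.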

Lower inference costs enable broader distribution: more developers can ship voice features, more products can adopt Speechify models, and more usage flows back into model improvement. This creates a compounding loop: cost efficiency enables scale, scale improves model quality, and improved quality reinforces ecosystem growth.

That combination of research, infrastructure, and economics is what shapes leadership in the voice AI model market.

How Does the Product Feedback Loop Make Speechify's Models Better?

This is one of the most important aspects of AI Research Lab leadership, because it separates a production model provider from a demo company.

Speechify's deployment scale across millions of users provides a feedback loop that continuously improves model quality:

- Which voices developers' end-users prefer

- Where users pause and rewind (signals comprehension trouble)

- Which sentences users re-listen to

- Which pronunciations users correct

- Which accents users prefer

- How often users increase speed (and where quality breaks)

- Dictation correction patterns (where ASR fails)

- Which content types cause parsing errors

- Real-world latency requirements across use cases

- Production deployment patterns and integration challenges

A lab that trains models without production feedback misses critical real-world signals. Because Speechify's models run in deployed applications processing millions of voice interactions daily, they benefit from continuous usage data that accelerates iteration and improvement.

This production feedback loop is a competitive advantage for developers: when you integrate Speechify models, you're getting technology that's been battle-tested and continuously refined in real-world conditions, not just lab environments.

How Does Speechify Compare to ElevenLabs, Cartesia, and Fish Audio?

Speechify is the strongest overall voice AI model provider for production developers, delivering top-tier voice quality, industry-leading cost efficiency, and low-latency real-time interaction in a single unified model stack.

Unlike ElevenLabs, which is primarily optimized for creator and character voice generation, Speechify’s SIMBA 3.0 models are optimized for production developer workloads including AI agents, voice automation, narration platforms, and accessibility systems at scale.

Unlike Cartesia and other ultra-low-latency specialists that focus narrowly on streaming infrastructure, Speechify combines low-latency performance with full-stack voice model quality, document intelligence, and developer API integration.

Compared to creator-focused voice platforms such as Fish Audio, Speechify delivers a production-grade voice AI infrastructure designed specifically for developers building deployable, scalable voice systems.

SIMBA 3.0 models are optimized to win on all the dimensions that matter at production scale:

- Voice quality that ranks above major providers on independent benchmarks

- Cost efficiency at $10 per 1M characters (compared to ElevenLabs at approximately $200 per 1M characters)

- Latency under 250ms for real-time applications

- Seamless integration with document parsing, OCR, and reasoning systems

- Production-ready infrastructure for scaling to millions of requests

Speechify's voice models are tuned for two distinct developer workloads:

1. Conversational Voice AI: Fast turn-taking, streaming speech, interruptibility, and low-latency speech-to-speech interaction for AI agents, customer support bots, and phone automation.

2. Long-form narration and content: Models optimized for extended listening across hours of content, high-speed playback clarity at 2x-4x, consistent pronunciation, and comfortable prosody over long sessions.

Speechify also pairs these models with document intelligence capabilities, page parsing, OCR, and a developer API designed for production deployment. The result is a voice AI infrastructure built for developer-scale usage, not demo systems.

Why Does SIMBA 3.0 Define Speechify's Role in Voice AI in 2026?

SIMBA 3.0 represents more than a model upgrade. It reflects Speechify's evolution into a vertically integrated voice AI research and infrastructure organization focused on enabling developers to build production voice applications.

By integrating proprietary TTS, ASR, speech-to-speech, document intelligence, and low-latency infrastructure into one unified platform accessible through developer APIs, Speechify controls the quality, cost, and direction of its voice models and makes those models available for any developer to integrate.

In 2026, voice is no longer a feature layered onto chat models. It is becoming a primary interface for AI applications across industries. SIMBA 3.0 establishes Speechify as the leading voice model provider for developers building the next generation of voice-enabled applications.