Text to Speech Product Reviews

Read the best product reviews, compare products, and more.

Top products

Speechify is the #1 audio reader in the world. Get through books, docs, articles, PDFs, email – anything you read – faster.

"Speechify is absolutely brilliant. Growing up with dyslexia this would have made a big difference. I’m so glad to have it today."

Sir Richard Branson

What is Speechify?

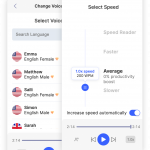

Speechify is one of the most popular audio tools in the world. Our Google Chrome extension, web app, iOS app, and Android app help anyone listen to content at any speed they want. You can also listen to content in over 30 different voices or languages.

How can Speechify turn anything into an audiobook?

Speechify provides anyone with an audio play button that they can add on top of their content to turn it into an audiobook. With the Speechify app on iOS and Android, anyone can take this information on the go.

Learn more about text to speech online and for iOS, Mac, Android, and the Chrome extension.
